Trends in AI

AI Governance and Impact on People

The accelerated adoption of AI poses a challenge that goes beyond technology: how can we govern systems that evolve faster than organizational structures, transform professional roles when tasks change quarterly, and preserve human judgment in critical decisions while delegating routine operation to autonomous systems?

This section addresses the organizational and human dimensions of AI: it examines the corporate governance models that are emerging for end-to-end AI oversight, the advanced operational practices for industrializing AI deployment (MLOps, LLMOps), and the ongoing transformation of professional roles and profiles. It also considers AI’s transversal adoption across sectors, its impact on daily life beyond work, the sustainability and social implications it introduces, and the operational ethical frameworks needed to translate abstract principles into concrete controls and continuous auditing. The question is no longer whether AI will transform organizations and people, but whether we will be able to govern this transformation with rigor, speed and responsibility.

Corporate governance of AI

AI overflows traditional frameworks

Traditional corporate governance models were designed for predictable technologies: systems that execute deterministic logic, operate within defined boundaries and behave in a reproducible manner. AI breaks all these assumptions: it makes decisions without human intervention, produces different outputs for the same input, operates through opaque internal processes that its own developers do not fully understand, and is critically dependent on external suppliers whose models evolve without direct organizational control.

Traditional technology governance structures are insufficient. Architecture committees and annual approval cycles are too slow for the pace of AI innovation, lack the expertise needed to assess its specific risks, and are not designed to manage the uncertainty inherent in systems that learn and change. The strategic question is how to govern AI without either slowing adoption or accumulating unmanageable risk.

Organization: emerging roles and structures

Organizations are creating specialized executive functions, albeit with uneven progress across sectors and companies. The role of Chief AI Officer (CAIO), Chief Data and AI Officer (CDAIO) or equivalent is emerging, with an estimated 26% of large organizations already having this role. In more mature organizations, the CDAIO reports directly to the president within a strong matrix structure across countries and business units. In other organizations, responsibility for AI leadership falls under the CTO, CIO, or Chief Innovation Officer.

Below the executive level, specialized roles are emerging that have not yet been standardized: AI Risk Manager, AI Ethics Officer, AI Compliance Lead, or Responsible AI Lead. These profiles usually report to second-line functions, but their responsibilities, authority and resources vary substantially across organizations.

In the first line of defense, the AI Center of Excellence (CoE) has become the consolidated model: cross-functional teams that combine technical knowledge (LLMs, multi-agent architectures, frameworks), shared infrastructure (cloud platforms, GPUs, licenses), the issuance of guidelines and standards, and internal consulting to business lines. Operationally, the hub-and-spoke model predominates. A central CoE establishes cross-cutting capabilities, while decentralized teams in the business lines develop specific solutions, reporting hierarchically to their own managers and functionally to the hub. This structure lets each line move at a speed suited to its maturity while maintaining overall architectural coherence.

In the second line, organizational maturity is notably lower. While the first line has consolidated structures, the second line shows high variability, and there is an open conceptual debate as to whether "AI Risk" should be treated as an autonomous risk in the corporate taxonomy or as a cross-cutting amplifier of existing risks. Very few organizations define it as a first-level risk (a tactical decision to ensure visibility and budget); more often, it appears as a second-level risk within Model Risk or Non-Financial Risk. Others do not include it formally and treat AI as a cross-cutting amplifier of existing risks (increased model risk, increased vendor risk, technology risk with additional vulnerabilities), reinforcing existing frameworks rather than creating new taxonomies.

Regardless of the taxonomic debate, a small coordination function emerges operationally, often called "AI Risk" or "AI Governance", which orchestrates risk assessments by specialized functions: Model Risk validates models, Technology Risk assesses infrastructure, Cybersecurity analyzes AI-specific vulnerabilities, Data Protection verifies privacy, Legal assesses intellectual property, Compliance verifies regulatory compliance, etc.

Governance bodies: from formal committee to operational working group

Virtually all systemically important organizations have set up an AI Committee whose composition reflects the three lines of defense: first line (innovation, analytics, data, technology), second line (model risk, operational risk, cybersecurity, data protection, vendor risk, legal, compliance), and third line (Internal Audit, as an observer). The committee is usually co-chaired by a first-line and a second-line manager.

However, governance does not take place only at the committee level. Beneath it there is usually an informal but critical structure: an AI Working Group composed of those who report directly to the committee members. They prepare materials, align positions, and resolve conflicts. As a result, issues reach the committee “for information” or “for approval,” rather than for discussion from scratch. The real governance (negotiation, consensus-building, and the resolution of tensions between speed and control) occurs in this pre-decisional layer, where the first and second lines build operational agreements before formal approval.

Risk framework architecture: backbone and sectoral uplifting

Organizations do not reinvent their risk frameworks from scratch; instead, they build on existing structures. For the AI risk framework, this approach produces a two-tier architecture. The first tier is a newly designed AI-specific backbone: a brief AI policy setting out principles, scope, roles, risk classification and approval processes, together with an AI procedure that describes the lifecycle comprehensively, from ideation to production. The second tier, and the more important one, is the uplifting of existing specialized frameworks.

Uplifting consists of complementing existing frameworks by adding specific AI chapters. The Model Risk framework incorporates generative AI validation, explainability techniques (SHAP, LIME, attention mechanisms), bias and hallucination detection, drift monitoring, etc. The Vendor Risk framework adds due diligence on LLM vendors, required certifications, specific SLAs, contingency plans, or exit strategies. The Data Protection framework includes AI-specific impact assessments, data minimization in AI systems, and exercise of GDPR rights when processing involves AI. The Compliance framework implements the AI Act: risk classification, registration, documentation, etc.

This architecture leverages infrastructure that works, assigns clear responsibilities, and maintains consistency without fragmentation. However, some technical challenges are genuinely new. Explaining transformer-based deep neural networks with billions or even trillions of parameters requires specialized techniques that did not exist in traditional model validation. Risk functions are developing these capabilities in real time.

Risk classification and lifecycle

The classification system in the European AI Act (prohibited, high-risk, limited-risk, minimal-risk) is necessary but insufficient for effective corporate governance. Regulation is designed to protect society and fundamental rights, not organizations against their own operational, reputational or financial risks.

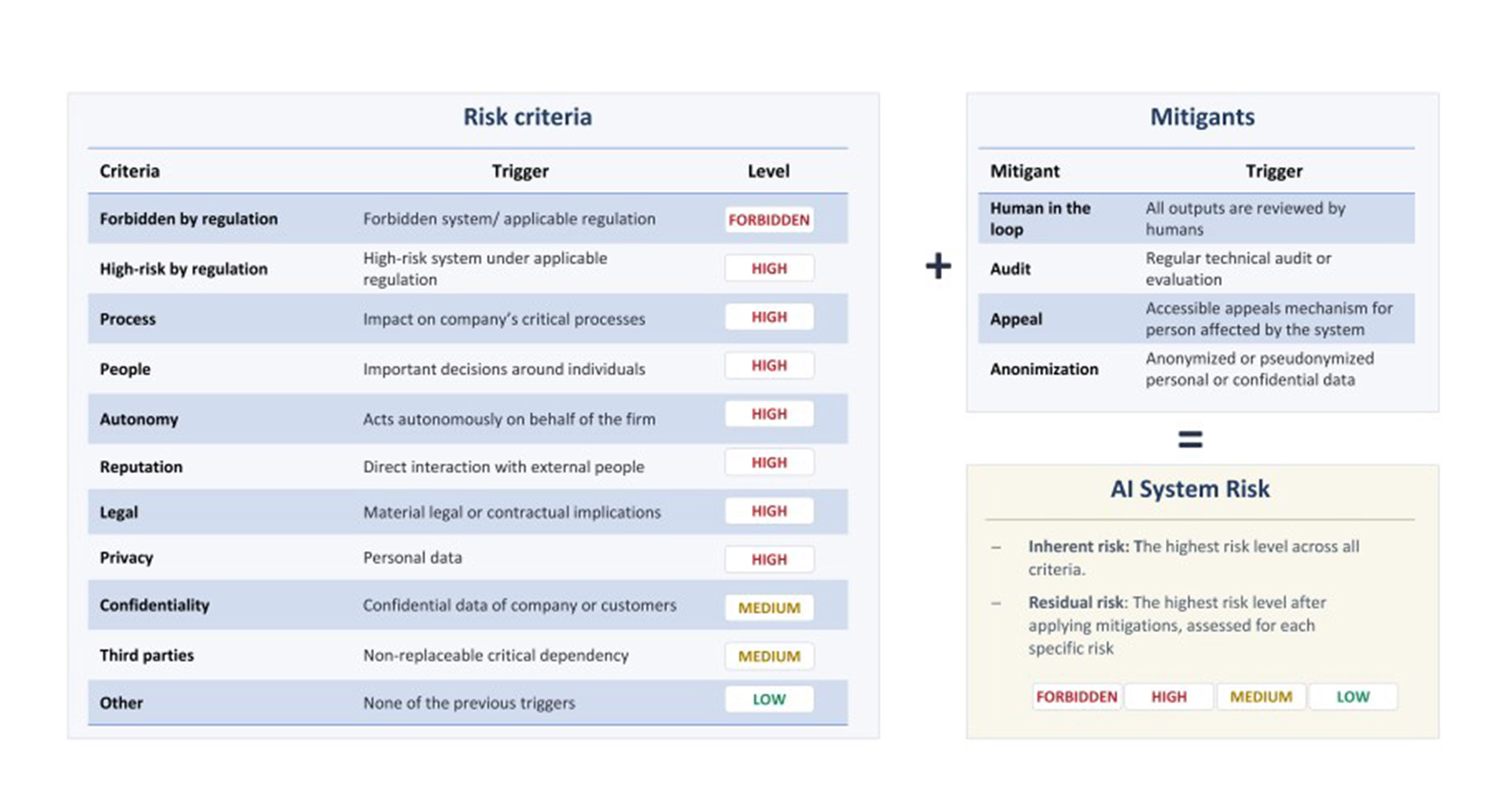

Therefore, organizations are converging towards more demanding internal AI risk classifications, which integrate regulatory criteria with their own: reputational impact, process criticality, cybersecurity classification, model tier according to validation, direct financial impact, and supplier maturity, among others. A system may not qualify as high-risk under the European AI Act, yet still be internally high risk if it affects critical business processes or creates significant reputational exposure (Fig. 4).

The resulting internal classification determines the operational consequences. For example, high risk implies a thorough analysis by all risk functions, formal committee approval, full documentation, independent validation, and intensive monitoring. By contrast, low risk usually implies a fast-track process, light analysis, and escalation to the committee for information only. The fast track is a critical element because it helps resolve the tension between speed and control: low-risk AI systems can be approved without delay, while governance and oversight resources are concentrated on the most critical systems.

Fig. 4. Example of a company's internal risk classification, beyond regulation.
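
The classification logic described above can be sketched in a few lines of Python. This is a minimal illustration built on stated assumptions, not a prescribed scheme. The three internal tiers, the mapping of AI Act categories to tiers, the criterion names and the governance steps are all hypothetical. The key design choice is that the internal tier is the strictest of the regulatory tier and every internal criterion, so a system can never be classified below what regulation requires.

```python
from enum import IntEnum


class Tier(IntEnum):
    """Hypothetical internal risk tiers, ordered so max() returns the most demanding."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Hypothetical mapping from AI Act categories to internal tiers.
REGULATORY_TIER = {
    "minimal-risk": Tier.LOW,
    "limited-risk": Tier.MEDIUM,
    "high-risk": Tier.HIGH,
}


def classify(ai_act_category: str, internal_criteria: dict) -> Tier:
    """Return the stricter of the regulatory tier and every internal criterion."""
    if ai_act_category == "prohibited":
        # Prohibited practices are rejected outright, not classified.
        raise ValueError("Prohibited AI practice: deployment not allowed")
    return max([REGULATORY_TIER[ai_act_category], *internal_criteria.values()])


def governance_track(tier: Tier) -> list:
    """Hypothetical governance steps required for each internal tier."""
    if tier == Tier.HIGH:
        return ["analysis by all risk functions", "formal committee approval",
                "full documentation", "independent validation", "intensive monitoring"]
    if tier == Tier.MEDIUM:
        return ["analysis by relevant risk functions", "committee approval",
                "standard monitoring"]
    return ["fast track with light analysis", "committee informed only"]


# A system that is minimal-risk under the AI Act but affects a critical process.
tier = classify("minimal-risk", {"process_criticality": Tier.HIGH,
                                 "reputational_impact": Tier.MEDIUM})
print(tier.name, governance_track(tier))
```

Other internal criteria, such as cybersecurity classification or supplier maturity, would simply be added as further entries in `internal_criteria`.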

Inventory and traceability

Organizations are required by regulation to maintain a single record of all AI systems deployed, including their risk classification and lifecycle status. This inventory must be complete, up-to-date and accessible to supervisors, auditors and risk functions.

Most organizations converge towards expanding the Model Risk inventory, based on the logic that AI systems require validation when they carry material model risk. Alternatively, some organizations expand the inventory of technology assets.
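
As an illustration, an inventory entry might look like the following Python sketch. The field names, lifecycle states and 365-day review window are hypothetical assumptions. The point is that risk classification, lifecycle status and links to the Model Risk inventory sit in one queryable record, so gaps such as overdue reviews can be detected automatically.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional

# Hypothetical lifecycle states, from ideation to retirement.
LIFECYCLE_STATES = ("ideation", "development", "validation", "production", "retired")


@dataclass
class AISystemRecord:
    system_id: str
    business_owner: str
    ai_act_category: str          # e.g. "high-risk", "minimal-risk"
    internal_tier: str            # result of the internal classification
    lifecycle_status: str
    model_ids: List[str] = field(default_factory=list)  # links to Model Risk inventory
    last_reviewed: Optional[date] = None

    def __post_init__(self) -> None:
        # Reject unknown states so the inventory stays consistent.
        if self.lifecycle_status not in LIFECYCLE_STATES:
            raise ValueError(f"Unknown lifecycle status: {self.lifecycle_status}")


def overdue_reviews(inventory: List[AISystemRecord], today: date,
                    max_age_days: int = 365) -> List[str]:
    """IDs of non-retired systems never reviewed or not reviewed within max_age_days."""
    return [
        r.system_id
        for r in inventory
        if r.lifecycle_status != "retired"
        and (r.last_reviewed is None or (today - r.last_reviewed).days > max_age_days)
    ]


inventory = [
    AISystemRecord("chatbot-01", "Retail", "limited-risk", "medium", "production",
                   model_ids=["llm-cs-v3"], last_reviewed=date(2025, 1, 10)),
    AISystemRecord("scoring-02", "Risk", "high-risk", "high", "validation"),
    AISystemRecord("ocr-03", "Operations", "minimal-risk", "low", "retired"),
]
print(overdue_reviews(inventory, today=date(2025, 6, 1)))
```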

Corporate governance of AI is maturing at an accelerated pace. The next few years will see a consolidation toward best practices, but the pace of technological evolution is such that it will likely continue to strain current organizational structures, which were designed for slower paradigms.

Industrialization of AI (MLOps, LLMOps)

From experimentation to production

At present, the main bottleneck in the actual adoption of AI is not algorithmic, but operational. For years, organizations of all types have developed promising AI systems in experimental environments that never made it to production, or that, once deployed, failed when confronted with real data, lost performance over time, or generated unmanageable costs and risks. The industrialization of AI arose precisely to close this gap between experimentation and sustained use in production environments.

Machine Learning Operations (MLOps) emerged as a structured response to this problem. It can be understood as "a set of standardized processes and technological capabilities to build, deploy and operationalize ML systems quickly and reliably". MLOps articulates processes and technical capabilities to manage data preparation, experimentation, training, validation, deployment, monitoring and continuous retraining of models in an integrated manner. The goal is not only to accelerate production deployment, but to ensure reliability, reproducibility, risk control and operational sustainability over time.

The advent of generative AI extends this challenge significantly. Large Language Model Operations (LLMOps) does not replace MLOps, but extends it to handle systems with radically different properties. Large language models introduce nondeterministic behavior, critical dependence on prompt formulation, opaque internal architectures with billions or trillions of parameters, and new risk vectors such as hallucinations, untruthful content generation, or targeted attacks via input manipulation. These complexities are intensified in agentic systems, where a single user interaction can trigger chains of reasoning, multiple internal model calls and autonomous execution of external tools.

MLOps/LLMOps Lifecycle Architecture

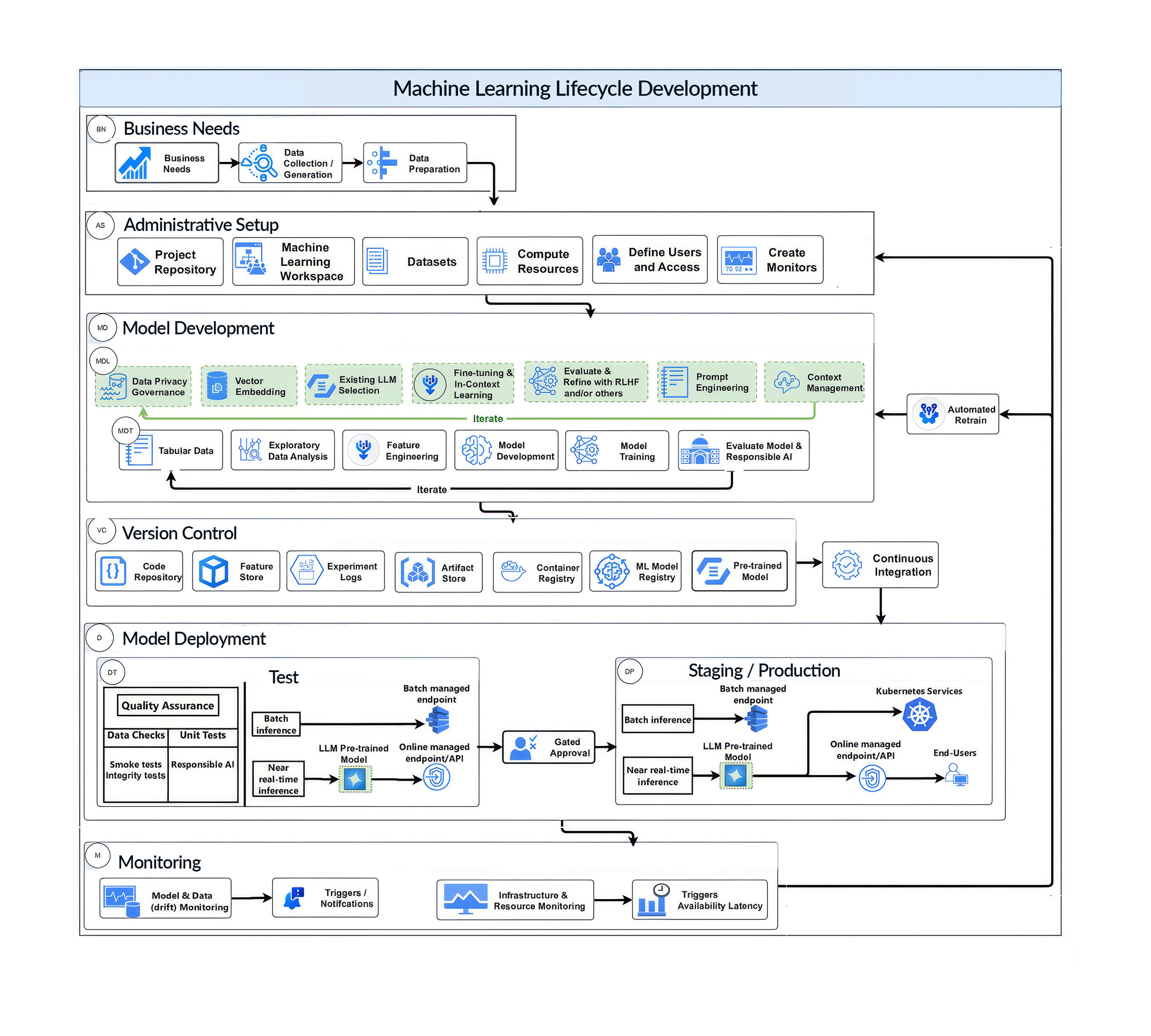

Effective industrialization of AI requires a holistic view of the operational lifecycle, which different authors express in different ways (Fig. 5), but with common elements:

In the data preparation phase, MLOps focuses on building robust pipelines for ingesting, cleansing, transforming and versioning structured data, ensuring traceability and quality. LLMOps extends this scope by working with large volumes of unstructured data (text, code, documents or images) and vector representations used in retrieval-augmented generation (RAG) architectures. This is in addition to strict requirements for personal data minimization, source control and compliance with privacy frameworks such as GDPR.
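
Here is a minimal sketch of the LLMOps side of data preparation. It assumes plain-text documents and uses a word-based chunk size chosen only for illustration. The text is first minimized: in this sketch, only e-mail addresses are masked, using a hypothetical regular expression. It is then split into chunks, and each chunk keeps its source and a content hash for traceability. The embedding and vector-store steps that would follow are deliberately omitted, since they depend on the model and database in use.

```python
import hashlib
import re
from dataclasses import dataclass
from typing import List

# Deliberately simple e-mail pattern; real PII detection covers many more data types.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


@dataclass(frozen=True)
class Chunk:
    source: str        # originating document, kept for source control and lineage
    position: int      # index of the chunk within that document
    text: str          # minimized text that would later be embedded
    content_hash: str  # fingerprint used to version the chunk


def minimize(text: str) -> str:
    """Mask e-mail addresses before the text enters the RAG index."""
    return EMAIL_RE.sub("[EMAIL]", text)


def chunk_document(source: str, text: str, max_words: int = 200) -> List[Chunk]:
    """Split a document into fixed-size word windows with lineage metadata."""
    words = minimize(text).split()
    chunks = []
    for start in range(0, len(words), max_words):
        body = " ".join(words[start:start + max_words])
        digest = hashlib.sha256(f"{source}\n{body}".encode("utf-8")).hexdigest()[:16]
        chunks.append(Chunk(source, start // max_words, body, digest))
    return chunks


chunks = chunk_document("policy.txt", "Contact jane.doe@example.com for details. " * 3,
                        max_words=5)
for c in chunks:
    print(c.position, c.content_hash, c.text)
```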

During experimentation and development, MLOps provides mechanisms to track experiments, compare models, manage hyperparameters and ensure reproducibility of results. In LLMOps, this phase incorporates new dimensions: versioned prompt management, evaluation of different foundation model configurations, fine-tuning or adaptation using techniques such as LoRA, and the design of metrics that capture qualitative aspects of generation, such as consistency, factuality, safety or contextual appropriateness. These metrics do not replace traditional quantitative metrics; they complement them where performance can no longer be measured by precision or error alone.
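
Versioned prompt management can be illustrated with a toy in-memory registry. This is a hypothetical sketch, not the API of any particular tool. Each distinct template receives a version identifier derived from its content hash, so an experiment can record exactly which prompt version produced a given result and reproduce it later.

```python
import hashlib
from typing import Dict, List, Optional, Tuple


class PromptRegistry:
    """Toy in-memory registry: each new template for a name becomes a new version."""

    def __init__(self) -> None:
        self._history: Dict[str, List[Tuple[str, str]]] = {}

    def register(self, name: str, template: str) -> str:
        version_id = hashlib.sha256(template.encode("utf-8")).hexdigest()[:8]
        history = self._history.setdefault(name, [])
        # Append only if the template differs from the latest version.
        if not history or history[-1][0] != version_id:
            history.append((version_id, template))
        return version_id

    def get(self, name: str, version_id: Optional[str] = None) -> str:
        """Return the latest template, or a specific version for reproducibility."""
        history = self._history[name]
        if version_id is None:
            return history[-1][1]
        for vid, template in history:
            if vid == version_id:
                return template
        raise KeyError(f"{name}@{version_id} not found")

    def versions(self, name: str) -> List[str]:
        return [vid for vid, _ in self._history.get(name, [])]


registry = PromptRegistry()
v1 = registry.register("summarize", "Summarize the following text:\n{text}")
v2 = registry.register("summarize",
                       "Summarize the following text in three bullet points:\n{text}")
# An experiment run would log (prompt name, version id, model, metrics) together.
prompt = registry.get("summarize", v1).format(text="Quarterly results improved.")
print(registry.versions("summarize"), prompt)
```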

Validation is one of the major points of divergence between the two approaches. While in MLOps validation relies on automated tests on reference data sets to assess accuracy, bias and robustness, in LLMOps it is essential to integrate human validation into the loop. The evaluation of generative outputs requires expert review, fact-checking techniques against trusted sources, semantic stress testing and red-teaming exercises designed to identify undesirable behavior or exploitable vulnerabilities. Validation is no longer a one-time event but a continuous process.
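
The routing idea behind human-in-the-loop validation can be sketched as follows. The grounding check is a deliberately crude, hypothetical lexical heuristic: it measures the share of answer sentences whose words mostly appear in a trusted source. The 0.6 and 0.8 thresholds are arbitrary assumptions, and real fact-checking would rely on stronger methods and expert review. What the sketch shows is the control flow: automated checks approve only clearly supported outputs and send everything else to a human reviewer.

```python
import re
from typing import List


def _words(text: str) -> set:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def support_ratio(answer: str, sources: List[str], min_overlap: float = 0.6) -> float:
    """Share of answer sentences whose words largely appear in at least one source.

    A crude lexical proxy for grounding; it cannot judge meaning or correctness.
    """
    source_words = [_words(s) for s in sources]
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", answer.strip()) if s]
    if not sentences:
        return 0.0
    supported = 0
    for sentence in sentences:
        words = _words(sentence)
        if words and any(len(words & src) / len(words) >= min_overlap
                         for src in source_words):
            supported += 1
    return supported / len(sentences)


def route(answer: str, sources: List[str], threshold: float = 0.8) -> str:
    """Auto-approve well-supported answers; queue everything else for human review."""
    return "auto_approved" if support_ratio(answer, sources) >= threshold else "human_review"


sources = ["The AI Act entered into force in August 2024."]
print(route("The AI Act entered into force in August 2024.", sources))
print(route("The AI Act entered into force in August 2024. It bans all chatbots.", sources))
```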

In the deployment phase, MLOps relies on relatively mature CI/CD pipelines, with well-defined versions of models and dependencies. LLMOps, on the other hand, must handle large-scale model deployments with significant infrastructure, latency and cost implications. This includes dynamic model selection based on use case, risk level or budget, as well as orchestration of complex systems where multiple models and agents interact with each other in a coordinated fashion.
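
Dynamic model selection can be reduced to a constrained choice. In this hypothetical sketch, each model in a catalog carries three attributes: the highest internal risk tier it is approved for, an illustrative price per 1,000 tokens, and a relative quality score. The router returns the cheapest model that satisfies the risk level, the quality requirement and the per-request budget. Model names and prices are invented for illustration and do not reflect any vendor.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ModelOption:
    name: str                  # hypothetical model identifiers
    max_risk_tier: int         # highest internal risk tier the model is approved for
    cost_per_1k_tokens: float  # illustrative prices, not real vendor pricing
    quality: int               # relative score from internal evaluations


CATALOG = [
    ModelOption("small-model", max_risk_tier=1, cost_per_1k_tokens=0.0005, quality=1),
    ModelOption("medium-model", max_risk_tier=2, cost_per_1k_tokens=0.003, quality=2),
    ModelOption("large-model", max_risk_tier=3, cost_per_1k_tokens=0.015, quality=3),
]


def select_model(risk_tier: int, min_quality: int, expected_tokens: int,
                 budget: float, catalog: List[ModelOption] = CATALOG) -> ModelOption:
    """Cheapest approved model that meets the risk, quality and budget constraints."""
    candidates = [
        m for m in catalog
        if m.max_risk_tier >= risk_tier
        and m.quality >= min_quality
        and m.cost_per_1k_tokens * expected_tokens / 1000 <= budget
    ]
    if not candidates:
        raise LookupError("No approved model fits this risk level, quality and budget")
    return min(candidates, key=lambda m: m.cost_per_1k_tokens)


print(select_model(risk_tier=1, min_quality=1, expected_tokens=2000, budget=0.01).name)
print(select_model(risk_tier=3, min_quality=1, expected_tokens=2000, budget=0.05).name)
```

Raising an error when no candidate fits, rather than silently falling back to a default model, keeps the decision visible to the orchestration layer and to monitoring.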

Monitoring is critical in both paradigms, but with different emphases. MLOps focuses on detecting data or concept drift, performance degradation, bugs and latency issues. LLMOps adds the need to monitor costs per token in real time (a key determinant of economic viability), identify patterns of unanticipated usage (prompt drift), and maintain full traceability of interactions for forensic analysis, auditing and regulatory oversight. Without this visibility, generative systems can quickly escalate in complexity and cost without effective control.
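
Two of the monitoring signals mentioned above can be sketched in Python. The first is the Population Stability Index (PSI), one common way, among several, to detect data drift between a training-time and a production distribution. A frequently used rule of thumb reads values below 0.1 as stable and values above 0.25 as significant drift. The second is a simple per-period token cost tracker with a budget alert. Prices, budgets and bin proportions are hypothetical.

```python
import math
from typing import Sequence


def psi(expected: Sequence[float], actual: Sequence[float], eps: float = 1e-6) -> float:
    """Population Stability Index between two binned distributions (bin proportions)."""
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) for empty bins
        total += (a - e) * math.log(a / e)
    return total


class TokenCostMonitor:
    """Accumulates LLM spend for one period and flags when a budget is exceeded."""

    def __init__(self, price_in_per_1k: float, price_out_per_1k: float,
                 budget: float) -> None:
        self.price_in = price_in_per_1k
        self.price_out = price_out_per_1k
        self.budget = budget
        self.spent = 0.0

    def record(self, tokens_in: int, tokens_out: int) -> bool:
        """Add one call's cost; return True if the budget is now exceeded."""
        self.spent += tokens_in / 1000 * self.price_in + tokens_out / 1000 * self.price_out
        return self.spent > self.budget


# Input-feature drift: share of observations per bin at training time vs. today.
drift = psi([0.25, 0.25, 0.25, 0.25], [0.10, 0.20, 0.30, 0.40])
print(f"PSI = {drift:.3f}")

monitor = TokenCostMonitor(price_in_per_1k=0.01, price_out_per_1k=0.03, budget=1.0)
alerts = [monitor.record(tokens_in=10_000, tokens_out=10_000) for _ in range(3)]
print(alerts, round(monitor.spent, 2))
```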

Finally, governance and regulatory compliance cut across the entire lifecycle. In MLOps, this involves documenting the lineage of data and models, defining clear responsibilities and ensuring quality controls. In LLMOps, these requirements are extended to respond to emerging regulatory frameworks such as the AI Act, including inventories of systems classified by risk level, human oversight mechanisms, and explainability strategies tailored to models that function as black boxes.

Fig. 5. Example phases of MLOps and LLMOps. Source: Stone (2025).

Integration with governance frameworks

MLOps and LLMOps are neither purely technical disciplines nor able to operate in isolation. They are the operational layer that puts into practice the principles defined in broader governance frameworks. The NIST AI Risk Management Framework (AI RMF) structures AI risk management as a continuous process that encompasses governance, contextualization, measurement and management throughout the system lifecycle. Complementarily, ISO/IEC 42001 defines the requirements for an AI management system, including policies, impact assessments, supplier control and continuous monitoring.

Automated MLOps and LLMOps processes (data pipelines, systematic validations, controlled deployments and ongoing monitoring) are the concrete mechanisms that enable these governance frameworks to move from theory to practice. Without a solid operational foundation, AI governance is reduced to aspirational documentation; without clear governance frameworks, technical industrialization lacks criteria for quality, accountability and risk control.

The mature adoption of AI therefore requires a real convergence between engineering, operations and governance. Industrialization of AI is not just about scaling models, but about building reliable, auditable and sustainable systems that can be responsibly integrated into critical business processes. MLOps and LLMOps are evolving best-practice frameworks, not finished solutions: if bringing AI into production were already a solved problem, we would no longer be seeing models that silently degrade, incur unexpected costs, or fail to scale. Their value lies in reducing friction and structuring risk management, not in eliminating risk altogether.

Upskilling, Reskilling and New Professional Roles

The human factor, AI bottleneck

The proliferation of AI is transforming work in more profound ways than suggested by discussions focused solely on task automation or replacement. The main challenge for organizations is not technological, but human: having the right capabilities to design, deploy, operate and govern AI systems in a sustainable way. In this context, talent is no longer understood as a reduced set of experts, but as a distributed organizational competence.

The conclusions in this section are supported by a comprehensive empirical analysis conducted by Management Solutions on a set of 16 large organizations in Europe and the United States, based on real market data and organizational evidence.

Convergence toward a stable core of AI roles

As AI matures, organizations are converging toward a relatively stable set of professional roles. Although the nomenclature varies across industries and companies, the functions performed by these profiles are beginning to become homogeneous. However, there are fewer formal roles than underlying skill sets: in practice, a single professional often brings together several of these capabilities, and the more unusual combinations (someone with expertise in LLMOps, regulation, and prompt engineering, for example) are both the most valuable and the scarcest. This convergence reflects an operational reality: the AI lifecycle (from data to production to control) requires differentiated capabilities that cannot be concentrated in a single type of professional.

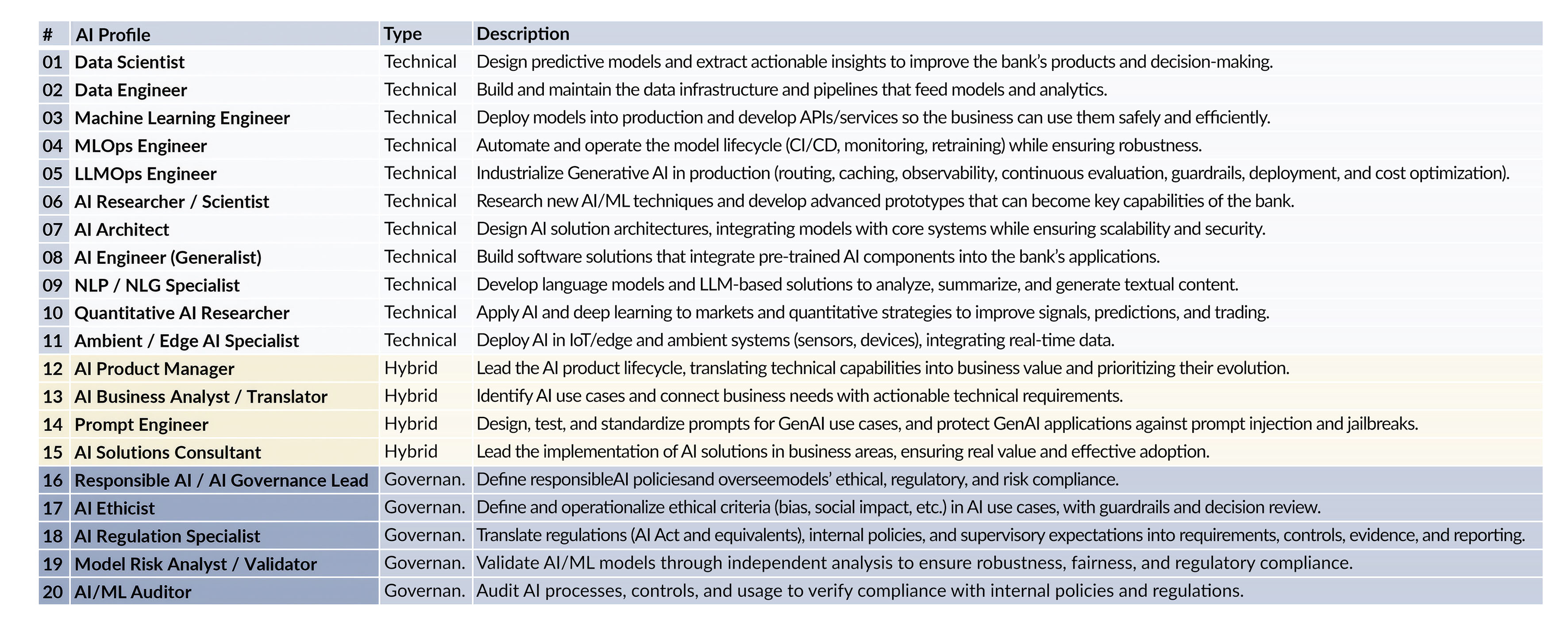

In summary, these professional competencies and capabilities can be grouped into three major blocks (Fig. 6). First, technical profiles, responsible for designing models, building data infrastructures and bringing solutions to production. Second, hybrid profiles, which connect technical capabilities with business needs and ensure that AI systems are adopted and generate real value. Finally, governance and control profiles, in charge of risk management, compliance, auditing and the responsible use of AI.

This competency structure forms the backbone on which the AI capabilities of organizations are built, regardless of the sector in which they operate.

Fig. 6. Consolidated and emerging AI profiles.

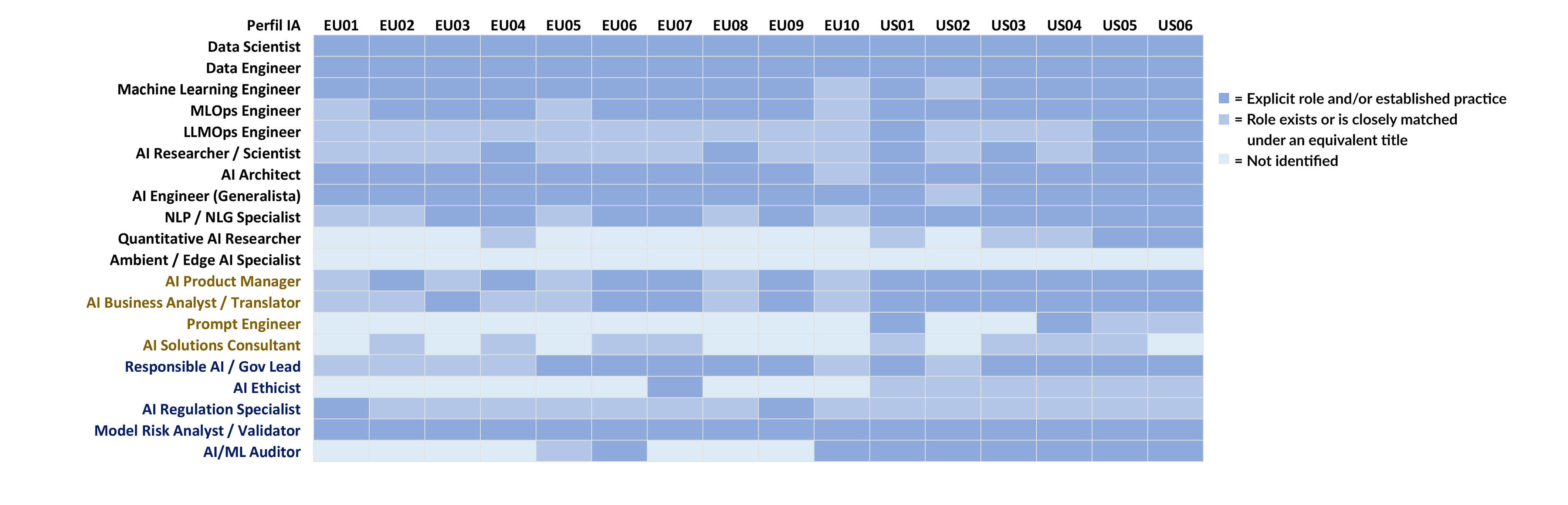

Uneven maturity in the adoption of specialized roles

Although the basic core of AI roles is widely deployed in advanced organizations (with significant heterogeneity), the adoption of emerging or more specialized profiles shows very uneven maturity. The differences lie less in the presence of general capabilities and more in the institutionalization of advanced functions related to the operation of generative and agentic AI, large-scale architecture or the specific governance of these systems.

This heterogeneity is especially visible in emerging roles linked to LLMOps, continuous evaluation of generative models, prompt security or AI governance. In some cases, these functions exist as formal roles; in others, they are informally integrated into more traditional teams, with mixed results in terms of control, scalability and cost.

The heat map (Fig. 7) shows that organizations differ mainly in which roles they have consolidated and in the level of specialization and autonomy of those roles.

Fig. 7. Heat map of AI role adoption.

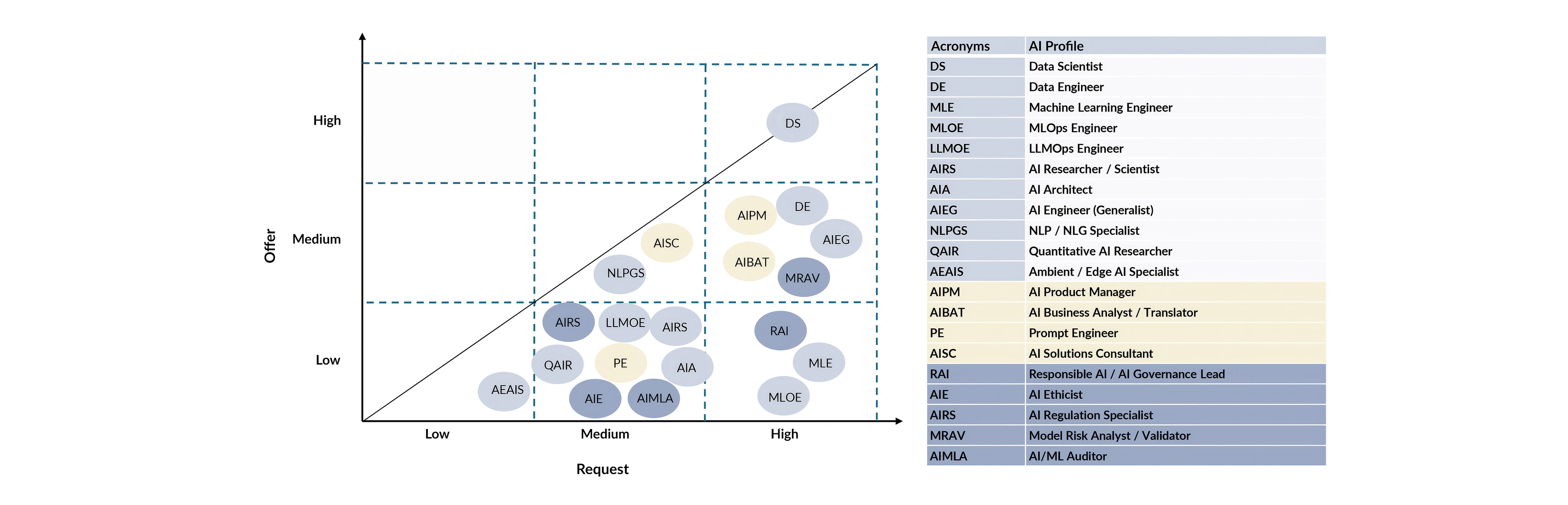

The real bottleneck: supply-demand imbalances

The supply and demand analysis (Fig. 8) shows a generalized structural imbalance in the AI talent market. Demand is high in practically all the profiles analyzed, while supply is systematically insufficient to absorb it. This is not a one-off or concentrated shortage, but a cross-cutting phenomenon that affects most of the AI professional ecosystem.

The only partial exception is the Data Scientist profile, which shows a situation closer to relative equilibrium. This behavior responds to its greater historical maturity, the existence of consolidated training trajectories and a larger pool of professionals with accumulated experience. However, even in this case, demand pressure remains high for senior or specialized positions.

For the other profiles, particularly those focused on production (Machine Learning Engineering, MLOps, LLMOps) and governance, risk, and control functions, the gap between demand and supply persists. The combination of technical complexity, required seniority, and increasing regulatory requirements limits the market’s ability to generate talent at the necessary pace. As a result, outsourcing alone cannot close the gap, making internal upskilling and reskilling a structural lever to scale AI with impact and control.

Fig. 8. Supply-demand matrix of AI profiles.

Strategic implications

The implications of this analysis are clear. An AI talent strategy cannot rely solely on attracting scarce profiles from the market. Internal upskilling and reskilling become strategic levers, especially for critical roles where external supply is structurally insufficient.

Likewise, operational and governance profiles are as decisive as modeling or research roles. Competitive advantage lies not only in developing advanced models but also in integrating them securely, efficiently, and compliantly into the organization’s actual processes.

Ultimately, the AI-driven transformation is not just technological. It is a transformation of work, professional roles and collective capabilities. Organizations that understand this human dimension and address it in a structured way will be better positioned to capture the value of AI in a sustained manner.

AI and Industry Transformation (AI + X)

AI as a cross-cutting layer

AI is no longer a technology applied incrementally in specific sectors, but a cross-cutting layer of intelligence that is simultaneously integrated into multiple economic and social domains. This transition, widely documented by international organizations, is characterized by a move from isolated pilots to structural adoption affecting entire processes, value chains and operating models. Not all progress follows a cross‑cutting logic: some of the most significant advances are driven by specific sectors (such as medical AI, defense, or regulated financial services) where domain specificity, the nature of the data, or the regulatory environment create highly specialized capabilities that are difficult to transfer to other contexts without substantial adaptation.

From a comparative perspective, evidence shows that differences between sectors are no longer explained by the mere presence of AI, but by the intensity and depth of its integration. The OECD classifies sectors according to their "AI intensity" based on factors such as task composition, data availability and human capital, showing that even traditionally low-digitized sectors are rapidly increasing their exposure to AI. This adoption is occurring in parallel across sectors, generating cross-acceleration effects and cross-sector learning.

In macroeconomic and labor terms, the impact is systemic. The International Monetary Fund estimates that about 40% of global employment is exposed to AI, with higher percentages in advanced economies, where the complementarity between AI and skilled labor is greater. The International Labor Organization qualifies that generative AI tends to automate specific tasks rather than entire occupations, reinforcing the need for organizational adaptation and continuous training.

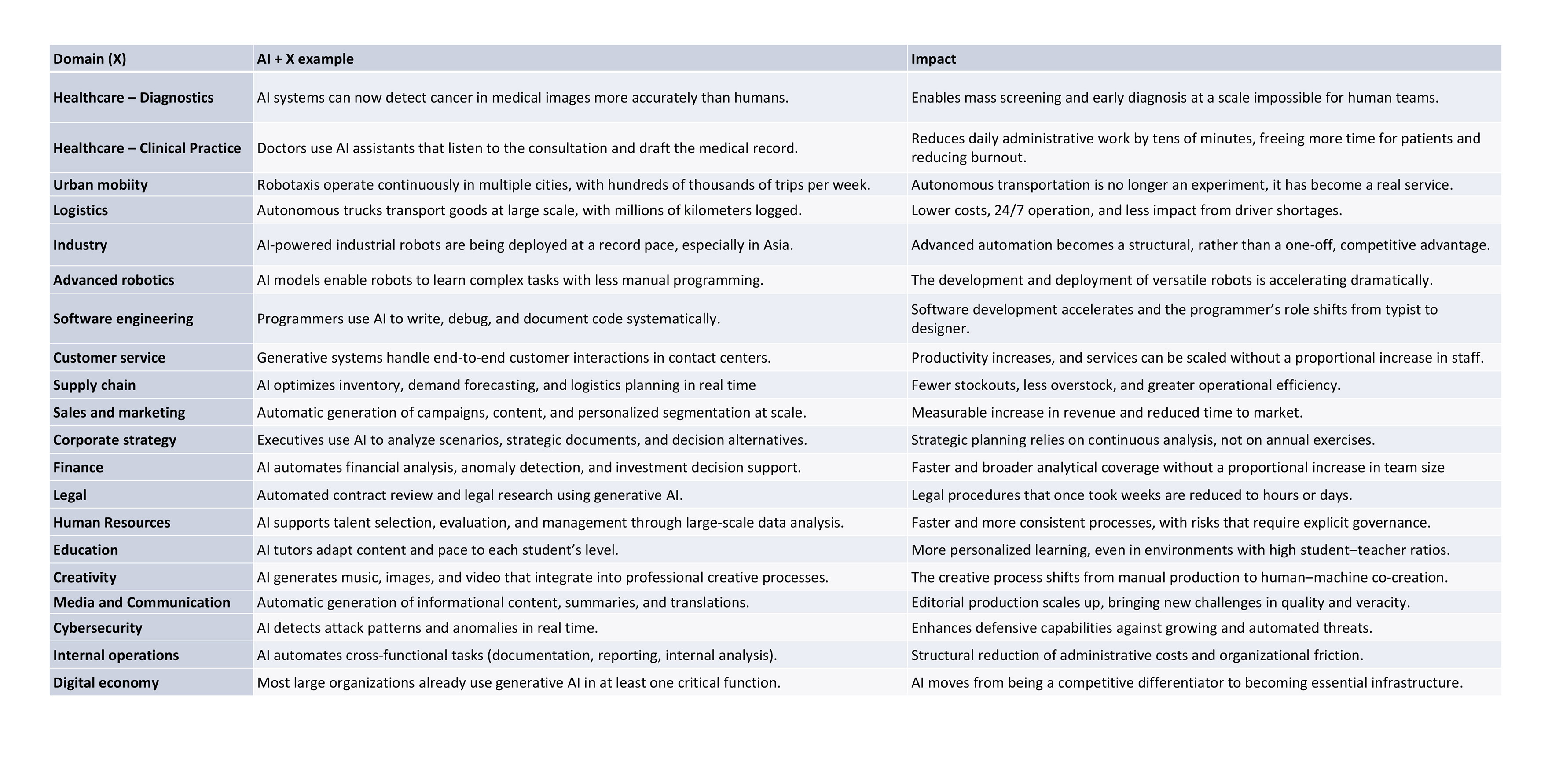

In this context, the AI + X concept is useful to describe a structural pattern: AI is applied within specific industries or professional fields, adapting to their unique tasks and processes, while retaining its broader capabilities as a general-purpose technology. Recent empirical evidence supports the view that this is not merely a collection of separate use cases, but a coordinated, cross-cutting integration. This section presents some illustrative examples, without claiming to be exhaustive (Fig. 9).

AI + Health: clinical accuracy and scalability

In healthcare, AI is being integrated into clinical diagnostics, medical decision support, patient monitoring and administrative automation. The World Health Organization documents a steady growth in the use of AI systems in radiology, pathology and primary care, highlighting both their clinical potential and associated risks.

Multiple studies show that AI systems achieve accuracy comparable or superior to that of human specialists in tasks such as cancer detection in medical imaging or dermatological classification. These results do not imply direct replacement, but a significant expansion of diagnostic and screening capacity at scale. As the WHO stresses, this advance requires strong governance frameworks, clinical validation and human oversight, given its direct impact on people.

AI + Education: personalization at scale

In education, generative AI is transforming content creation, assessment and learning support. UNESCO identifies the growing use of adaptive tutors, automatic generation of learning materials and continuous formative assessment systems, with the potential to improve personalization and reduce educational gaps.

Education is one of the sectors where AI can have structural impacts in the medium term, by enabling more flexible and adaptive learning models. However, these benefits critically depend on public policies and institutional frameworks that preserve equity, academic integrity and the role of teachers.

AI + Work: reconfiguring tasks

AI is redefining work in a cross-cutting way, affecting occupations in virtually every sector. The IMF estimates that exposure to AI reaches approximately 60% of employment in advanced economies, compared to lower percentages in emerging economies, reflecting differences in productive structure and human capital.

The ILO concludes that, for now, generative AI has a greater impact on specific cognitive tasks than on entire jobs, implying a reconfiguration of job content rather than a massive substitution. This evidence reinforces the need for upskilling and reskilling strategies, as discussed above.

AI + Industry: Productivity and Efficiency

In industrial and operational environments, AI is applied to predictive maintenance, process optimization, planning and advanced robotics. Many organizations have already moved from pilot testing to full-scale deployments, integrating AI into critical production and logistics processes.

There is already strong evidence of significant efficiency improvements and reduced failures in industrial systems where AI is systematically integrated, although the greatest benefits are seen when the technology is accompanied by organizational and process changes.

AI + Finance

In information-intensive sectors, such as finance and professional services, AI is used for fraud detection, risk management, service personalization and document automation. A sustained expansion of AI use in these areas can be observed, with relevant differences depending on the regulatory framework and organizational capacity.

The adoption of generative AI in these sectors is associated with productivity improvements and the emergence of new service models, although it is noted that the benefits depend on data quality, governance and integration into existing processes.

AI + Creativity: new cultural models

AI-generated text, images, music, and video are transforming the creative and media industries. In recent years, we have seen rapid growth in these applications and a substantial increase in their commercial and cultural use.

This development raises relevant regulatory and economic debates, especially around intellectual property and business models, which are being unevenly addressed by different regulatory frameworks.

Summary: from sectors to cross-cutting infrastructure

Beyond sector-specific examples, the evidence points to a central insight: the current transformation stems not from isolated AI adoption in individual sectors, but from its simultaneous, cross-cutting integration as operational and cognitive infrastructure. Studies agree that competitive advantage is no longer achieved by applying AI to individual functions, but by integrating it coherently along the entire value chain.

In this sense, AI + X is not a terminological fad but a structural pattern of transformation. Understanding this logic is essential for anticipating sectoral impacts, designing appropriate public policies and defining organizational strategies that can sustainably capture the value of AI.

Fig. 9. Representative examples of AI + X, prioritizing cases where AI has moved from pilot to measurable operational use. Source: adapted from Stanford (2025).

AI in Personal and Everyday Life

The paradigm shift: people first, then organizations

Generative AI has staged a radical reversal of the traditional technology paradigm. Unlike previous enterprise technologies (cloud computing, ERP, CRM) that were born in corporate environments and gradually filtered down to personal consumption, generative AI first burst into people's daily lives and only later was it formally adopted by organizations.

The data is compelling: according to estimates, ChatGPT reached approximately 900 million weekly active users by the end of 2025. This figure places generative AI among the fastest-adopted technologies in history, surpassing the penetration rate of mobile internet, social networks or streaming services.

More tellingly, personal use not only precedes professional use but consistently exceeds it. In the European Union, 25.1% of the population uses generative AI tools for personal purposes, compared to 15.1% who use them in work contexts and just 9.4% in formal education. In OECD countries, more than a third of individuals report regular use of generative AI, with students leading adoption. Three-quarters of students over the age of 16 use these tools, while 41.1% of employees integrate them into their work, often informally and even before their organizations authorize it.

This time sequence is not anecdotal: it redefines the logic of digital transformation. Organizations are not leading AI adoption; they are responding to capabilities that their employees, customers and suppliers already possess and use unofficially.

The phenomenon of shadow AI (unauthorized use of AI tools in corporate environments) is not a transitory anomaly, but a structural consequence of this reversal. People acquired augmented cognitive capabilities in their personal lives and then spontaneously transferred them to their jobs, creating risk exposures that most companies still do not control.

Magnitude, distribution and fractures: who uses AI and for what purpose

The adoption of AI in personal life spans a broad spectrum of activities. Dominant uses include content creation (text, images, music and videos), support for learning and conceptual exploration, automation of routine tasks, conversational companionship, and access to information: in the United States, 10% of adults use AI chatbots to get news, introducing a new vector of information mediation with profound implications for public debate.

However, this democratization is highly asymmetric. The geographical divides are extreme: in Norway, 56% of the population uses generative AI tools; in Denmark, 48.4%; in Estonia, 46.6%. In contrast, the figure drops to 17% in Turkey and 17.8% in Romania. Even among developed economies, the differences are substantial: Germany stands at 32%, France at 37% and Spain at 38%, while Italy remains below 20%.

The generation gap is even more pronounced. Adoption differs by 53.6 percentage points between the youngest and oldest age groups, making age the most decisive segmentation factor, well ahead of education (a 21-point gap) and income (21 points). While 75% of students use generative AI regularly, only 12.5% of retired or economically inactive people report having used it. The gender gap, by contrast, is comparatively small: 4.2 percentage points.

The paradox of mass adoption

Globally, 66% of the population believes that AI-based products and services will significantly impact their daily lives in the next three to five years, an increase of six percentage points since 2022. However, this recognition of the impending impact coexists with growing ambivalence.

The general public shows a high level of concern: in the United States, 51% of adults say they are more concerned than excited about the increased use of AI in everyday life, compared to only 11% who are more excited than concerned. Among AI experts, the ratio is reversed: 47% are more excited than concerned, and only 15% more concerned. This divergence of 36 percentage points on both measures reflects not only differences in technical knowledge, but structurally different perceptions of the risk-benefit balance.
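The gaps between the two groups can be checked directly from the four shares quoted above. A minimal Python sketch follows; the net-balance view (share more excited minus share more concerned) is an illustrative addition, not a measure reported by the underlying survey.

```python
# Shares (%) quoted in the text for US adults ("public") and AI experts.
SURVEY = {
    "public":  {"more_concerned": 51, "more_excited": 11},
    "experts": {"more_concerned": 15, "more_excited": 47},
}


def net_sentiment(shares):
    """Illustrative net balance in percentage points: more excited minus more concerned."""
    return shares["more_excited"] - shares["more_concerned"]


# Gap on each answer taken separately.
concern_gap = SURVEY["public"]["more_concerned"] - SURVEY["experts"]["more_concerned"]
excitement_gap = SURVEY["experts"]["more_excited"] - SURVEY["public"]["more_excited"]

print(concern_gap, excitement_gap)  # 36 36
print(net_sentiment(SURVEY["public"]), net_sentiment(SURVEY["experts"]))  # -40 32
```

On either answer taken alone the gap is 36 points; on a net basis the public sits at -40 and experts at +32, a spread of 72 points.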

Familiarity with AI does not automatically lead to trust. Although personal use correlates positively with perceiving AI as an opportunity, it also increases awareness of its risks. Confidence that AI companies adequately protect personal data fell from 50% in 2023 to 47% in 2024, and the proportion of people who believe that AI systems are unbiased and free of discrimination also decreased.

The context of use is decisive. Research in the UK shows swings of up to 110 percentage points in public acceptance depending on the specific application: from a net balance of +53% comfortable with AI analyzing traffic data, to -57% opposed to AI being used to analyze political preferences and target personalized advertising.

There is, however, a cross-cutting consensus between experts and the general public: both groups want more control over how AI is used in their lives (55% of the public and 57% of the experts), and both feel they do not currently have that control (less than 25% in both cases). This gap between demand for agency and perceived powerlessness represents one of the most pressing democratic governance challenges of AI.

Inequality of access and risk of exclusion

The asymmetry in the adoption of AI in personal life is not a conventional digital divide. It is not merely a matter of access to technological infrastructure (internet connection, devices), but of differentiated access to augmented intellectual capacity. Those who integrate AI as an everyday cognitive tool gain cumulative advantages in learning, productivity, creativity and access to information; those who do not use it face a growing structural disadvantage.

Digitally disconnected groups perceive AI in a markedly negative way: 51% anticipate a negative personal impact, compared to only 17% who expect benefits. This perception is not irrational: it reflects the intuition that the ongoing transformation may redistribute opportunities in profoundly unequal ways.

AI is already transforming the daily lives of approximately one billion people. The strategic question is no longer whether this transformation will continue, but whether societies will build frameworks that turn AI into inclusive public infrastructure or allow it to consolidate as a vector of social fragmentation that is difficult to reverse.

AI, Sustainability and Social Impact

Sustainability enters the Algorithmic Age

The sustainable transition is no longer exclusively a question of physical infrastructures (new renewable plants, power grids, or electrification of transportation) but also a challenge of systemic coordination. Today's energy systems combine distributed generation, renewable intermittency, storage, dynamic markets and flexible demand. This complexity cannot be managed by static rules or linear planning alone.

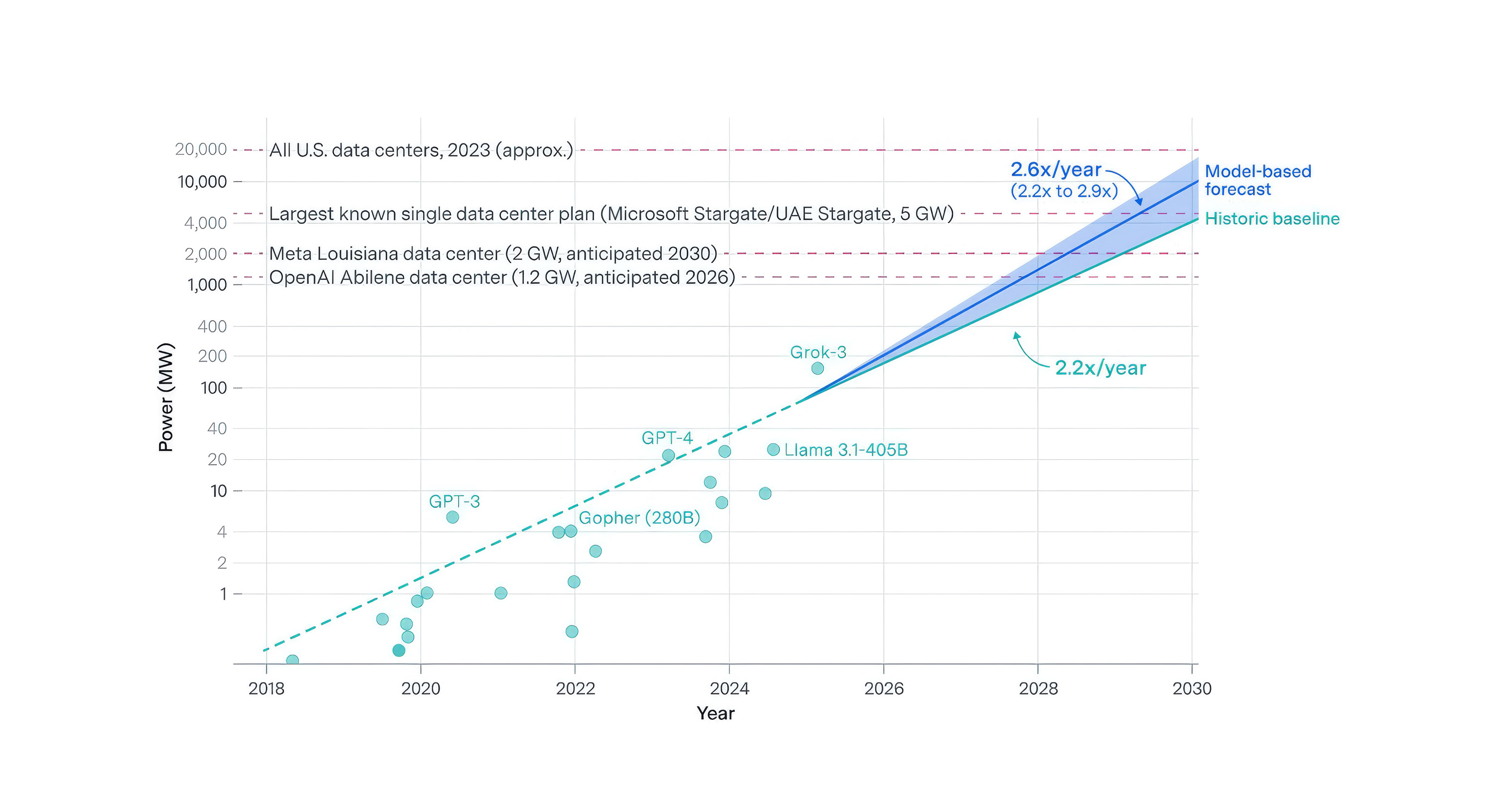

In this context, AI emerges as a cognitive infrastructure capable of modeling interdependencies, simulating scenarios and optimizing decisions in real time. At the same time, AI is itself a growing source of demand: accelerated electrification and structural growth in electricity consumption are reshaping capacity, flexibility and network planning needs for the coming years. In 2024, data centers consumed 1.5% of global electricity, the power required to train frontier models is growing at a rate of 2.2x to 2.9x per year (Fig. 10), and the largest training runs already exceed 100 MW of instantaneous power. Sustainability is thus entering a phase in which computational capacity becomes an integral component of the energy and climate system.
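To see what the quoted growth rates imply, the sketch below compounds the ~100 MW figure forward. It assumes, purely for illustration, that 100 MW is the 2025 starting point and that growth stays constant for five years; neither assumption comes from the source.

```python
def project_power_mw(base_mw, annual_growth, years):
    """Compound a starting power level by a constant annual growth factor."""
    return base_mw * annual_growth ** years


BASE_MW = 100  # "the largest runs already exceed 100 MW" (assumed 2025 baseline)
YEARS = 5      # assumption: 2025 -> 2030

for growth in (2.2, 2.9):  # annual growth range quoted in the text
    gw = project_power_mw(BASE_MW, growth, YEARS) / 1000  # MW -> GW
    print(f"{growth}x per year -> {gw:.1f} GW by 2030")
```

Under these assumptions the result, roughly 5 to 20 GW, is of the same order as the 4-16 GW per-run projection for 2030 cited later in this section; compounding at a constant rate is a simplification, not a forecast.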

The shift is conceptual: from decisions based on historical averages to decisions based on dynamic simulations and high-resolution probabilistic analysis. AI does not replace engineering or physics; rather, it amplifies the capacity for anticipation and coordination in systems with multiple variables and simultaneous constraints.

Fig. 10. Frontier AI energy demand growth. Source: Epoch (2025b).


Optimizing the planet: efficiency, resilience and adaptation

In operational terms, AI is acting as a transition accelerator. In power grids, predictive models make it possible to anticipate demand peaks, optimize dispatch, reduce congestion and improve the integration of variable renewables. Without AI, the optimal integration of renewables and real-time management would be more uncertain. In an environment where electricity plays a central role in decarbonization, operational efficiency ceases to be marginal and becomes a structural condition.

The relationship between energy and AI is also bidirectional. AI can significantly improve energy efficiency, grid planning and complex system management, and could reduce emissions by around 1,400 Mt CO2eq per year by 2035 in wide-adoption scenarios. However, that reduction potential coexists with a structural increase in the energy consumption of AI's own infrastructure which, without a parallel transition to clean energy, may leave the net emissions balance neutral or even unfavorable. The strategically relevant question is not whether AI can contribute to decarbonization (it can), but under what energy and governance conditions that potential can materialize without being offset by its own infrastructural footprint.

Beyond the power system, AI improves resilience in the face of increasing physical risks. Climate modeling supported by machine learning increases the spatial and temporal granularity of predictions, facilitating decisions in urban planning, insurance and critical infrastructure. In agriculture and water management, data-driven optimization reduces waste and adjusts inputs to environmental constraints.

These developments fit into a broader global agenda. Progress towards the Sustainable Development Goals is advancing against a backdrop of energy, climate and demographic stresses. In this scenario, AI can act as a cross-cutting catalyst: not as a substitute for public policies, but as a tool that improves efficiency, reduces friction and enables more precise allocations of scarce resources.

The hidden cost: energy, materials and infrastructure concentration

The same digital infrastructure that enables these improvements generates new pressures. The growth of data centers and computational loads associated with AI is contributing to rising electricity demand in certain advanced economies:

- The IEA projects that global data center electricity consumption will increase to 945 TWh per year by 2030, equivalent to Japan's electricity consumption today, with AI as the main driver of that expansion.

- The largest individual runs of frontier model training could require 4-16 GW of power by 2030, equivalent to the output of several nuclear power plants and the electricity consumption of millions of homes.

- The power required to train frontier models has been growing by more than 2x per year, driven by compute growth of 4-5x per year, partially offset by hardware energy efficiency improvements (ca. 40% per year on leading GPUs).
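The relationship in the last bullet can be written as a simple ratio. Because power is energy per unit of time, a run's power grows with its total compute and shrinks with gains in energy efficiency (compute per joule) and with longer wall-clock durations. The sketch below is a back-of-the-envelope model under those assumptions; the `duration_growth` parameter is a hypothetical addition, not a figure from the source.

```python
def power_growth(compute_growth, efficiency_growth, duration_growth=1.0):
    """Yearly growth factor of the power drawn by a frontier training run.

    power = energy / time and energy = compute / efficiency, so
    power growth = compute growth / (efficiency growth * duration growth).
    Efficiency is compute per unit of energy; duration is the run's wall-clock length.
    """
    return compute_growth / (efficiency_growth * duration_growth)


EFFICIENCY_GROWTH = 1.4  # "ca. 40% per year" read as a 1.4x yearly gain in compute per joule

for compute in (4.0, 5.0):  # "compute growth of 4-5x per year"
    print(f"compute {compute}x/yr -> power {power_growth(compute, EFFICIENCY_GROWTH):.2f}x/yr")
```

Holding duration constant, 4-5x compute growth with a 1.4x efficiency gain gives roughly 2.9x to 3.6x per year: consistent with "more than 2x", but above the 2.2x-2.9x range quoted earlier. One plausible reading, which is our assumption, is that longer training runs account for part of the difference.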

Therefore, massive deployment of advanced models may become a structural driver of power consumption growth if not accompanied by efficiency improvements and energy planning.

The sustainability of AI cannot be assessed solely by the benefits it produces, but also by the resources it consumes: in baseline scenarios, CO2 emissions from data center electricity could reach 300-320 Mt CO2 per year by 2030 if additional electricity continues to rely heavily on fossil fuels. Training and deployment of large-scale models require power, water for cooling (in some regions, in direct competition with agricultural or household use), and critical material-intensive hardware. In addition, the geographic location of data centers introduces asymmetries: the carbon intensity of electricity varies substantially between regions, implying differentiated environmental footprints for the same digital service.
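The point about regional asymmetries reduces to one multiplication: operational emissions equal the electricity consumed times the carbon intensity of the grid supplying it. The sketch uses hypothetical intensities chosen only to show the spread; it also ignores water use and the embodied emissions of hardware, both of which the paragraph above lists as part of the footprint.

```python
def operational_emissions_kg(energy_kwh, grid_gco2_per_kwh):
    """Operational emissions = electricity used x carbon intensity of the supplying grid."""
    return energy_kwh * grid_gco2_per_kwh / 1000  # grams -> kilograms


WORKLOAD_KWH = 10_000  # hypothetical: the same service, same period, same hardware
GRID_INTENSITY = {     # hypothetical gCO2/kWh values, for illustration only
    "low-carbon grid": 50,
    "fossil-heavy grid": 700,
}

for region, intensity in GRID_INTENSITY.items():
    print(f"{region}: {operational_emissions_kg(WORKLOAD_KWH, intensity):,.0f} kg CO2")
```

With these illustrative values, the same 10,000 kWh workload produces fourteen times more emissions purely because of where it runs.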

This infrastructural dimension adds a geopolitical layer. The concentration of computational capacity in certain countries or regions reconfigures strategic dependencies and access to technology. AI is not just an environmental tool; it is also a critical node on the global energy and technology map.

The question is not whether AI "offsets" its footprint, but how it is designed and deployed to maximize energy efficiency per unit of cognitive capacity generated. The sustainability of AI thus becomes an architectural and governance issue.

Sustainability without inclusion is not sustainable

The environmental dimension does not exhaust the analysis. It is important to distinguish between the socially just energy transition, linked to changes in production and energy systems, and the just transition associated with AI deployment itself. The latter introduces specific distributive effects: the ability to integrate advanced technologies depends on human capital, digital infrastructure and institutional quality. Economies with higher technological density tend to capture productivity and efficiency benefits earlier, potentially widening gaps with less prepared regions.

From the perspective of human development, technology can expand capabilities (education, access to services, economic participation, etc.) or consolidate existing exclusions, depending on the context of adoption and the degree to which access to its tools is democratized. Extending digital capabilities to SMEs, entrepreneurs and groups with less technological capital is an important mechanism for matching the pace of innovation with the capacity to adjust to it. In the labor sphere, the convergence between the green transition and intelligent automation can accelerate sectoral reallocation and skills transformation, with displacement potentially occurring before new opportunities arise. Managing this time lag requires strategic planning, active training policies and mechanisms to help ensure productivity gains are widely shared.

From a structural perspective, environmental sustainability requires a socially just energy transition that avoids concentrating the costs of change in certain territories, sectors or groups. This challenge is conceptually independent of the use of AI. At the same time, AI deployment introduces its own just transition: productivity and efficiency gains often materialize unevenly and with a time lag relative to initial negative impacts, particularly on employment and certain skills. Therefore, AI applied to sustainability must be evaluated not only for its aggregate impact, but also for how its benefits are distributed and for the public and private strategies that broaden access and mitigate the social costs of adjustment.

Sustainable AI by design: from ambition to discipline

The convergence of these dimensions (systemic optimization, infrastructural pressure and distributional effects) compels a shift in the debate from general principles to verifiable practices. AI aligned with sustainability goals requires explicit metrics on energy consumption and associated emissions, transparency on architecture and deployment location, and assessment of social impacts from automated decisions.

The SDG framework reinforces this need for structural coherence between technology and sustainable development. In turn, the interrelationship between energy and digitalization requires that energy considerations be integrated into corporate and public technology planning.

In summary, AI occupies an ambivalent position in the sustainable transition: it can enable emission reductions on the order of gigatons, yet the training of its most advanced models could reach 4-16 GW per run by 2030, placing AI on the same energy consumption scale as large industrial infrastructures.

AI is simultaneously an accelerator of systemic efficiency, a new infrastructural burden and a distributive force with differentiated social effects. The question is not whether AI will be part of the sustainable transition, but under what energy and social conditions it will support a sustainable transition.

AI Ethics and Philosophy

An open problem after more than 200 frameworks

The ILO has catalogued 245 AI ethics frameworks, codes and recommendations issued since 2017 by governments, international bodies, companies and civil society. An earlier academic meta-analysis of 200 such documents identified at least 17 recurring principles (transparency, fairness, accountability and privacy, among others), so a baseline normative consensus does exist. Yet the ethical challenges associated with AI have not diminished as these frameworks have proliferated; they have grown in complexity, raising questions for which no existing framework provides guidance.

The gap between principle formulation and translation into concrete decisions is not merely a technical problem: it is the central challenge of AI governance.

From principle to action: how to operationalize AI ethics

The gap between a values document and the actual behavior of an AI system is where the operational risk lies. This phenomenon, known as "ethics washing," does not always stem from deliberate intent: it often reflects the genuine difficulty of translating an abstract principle ("the system must be equitable") into concrete design criteria, audit procedures or organizational lines of accountability. Addressing this gap requires shifting the approach to ethics from declarative to operational.
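As an illustration of turning "the system must be equitable" into a testable design criterion, one common choice is to compare approval rates across groups. The sketch below computes a disparate impact ratio (lowest group rate divided by highest); the 0.8 default threshold echoes the "four-fifths" rule of thumb from US employment-selection guidance. The metric, threshold and function names are assumptions for illustration, not a criterion the text prescribes, and other fairness definitions can conflict with this one.

```python
from collections import defaultdict


def selection_rates(decisions):
    """decisions: iterable of (group, approved) pairs. Returns the approval rate per group."""
    totals, approved = defaultdict(int), defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact_ratio(decisions):
    """Lowest group approval rate divided by the highest (1.0 means parity)."""
    rates = selection_rates(decisions)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group is approved at all: rates are equal, so treat as parity
    return min(rates.values()) / highest


def meets_equity_criterion(decisions, threshold=0.8):
    """Hypothetical design criterion: the ratio must stay at or above `threshold`."""
    return disparate_impact_ratio(decisions) >= threshold


# Hypothetical audit sample: 100 decisions per group.
sample = ([("A", True)] * 60 + [("A", False)] * 40
          + [("B", True)] * 42 + [("B", False)] * 58)
print(round(disparate_impact_ratio(sample), 2), meets_equity_criterion(sample))  # 0.7 False
```

Group A is approved 60% of the time and group B 42%, giving a ratio of 0.7, so this hypothetical system would fail the criterion and be routed for review.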

A well-documented and widely cited example of operationalization in the financial sector is De Volksbank, a Dutch bank whose AI ethics governance has been analyzed in detail. Its structure includes an AI Ethics unit integrated within the Compliance function, staffed by people with both philosophical and technical backgrounds, which evaluates each AI system individually before deployment through ethical impact analysis, bias screening, decision traceability and formalized escalation channels. The case illustrates that effective operationalization lies not in the scope of the stated principles, but in the robustness of the processes, roles and decision points that bring them to life.

Synthesizing the state of the art, an operational AI ethics framework in a large organization typically incorporates six components:

1. Governance structure: definition of decision-making roles, review responsibilities, and lines of defense, with explicit documentation of accountabilities.

2. System impact assessment: specific analysis for each use case, proportional to the system’s level of autonomy and the consequences of the decisions it makes.

3. Continuous management of biases: detection and correction mechanisms that operate on a permanent basis, not as one-off audits.

4. Transparency and audience-specific explainability: the information requirements of regulators, clients and employees affected by automated decisions are substantially different.

5. Escalation and complaint mechanisms: accessible and secure channels for reporting unexpected system behavior.

6. Periodic review of the framework itself: regular reassessment of the AI ethics framework, since model updates may change the risk profile of deployed systems; evaluation is ongoing, not limited to initial deployment.
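Component 2 calls for assessment effort proportional to autonomy and consequences. One way to make that proportionality explicit is a small lookup that maps both dimensions to a required level of review. The three-level scale, the additive score and the review descriptions below are all hypothetical.

```python
from enum import IntEnum


class Level(IntEnum):
    """Hypothetical three-point scale used for both dimensions."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Hypothetical mapping from combined score (2..6) to the depth of review required.
REVIEW_BY_SCORE = {
    2: "self-assessment by the product team",
    3: "self-assessment by the product team",
    4: "review by the AI ethics function",
    5: "review by the AI ethics function plus a bias audit before launch",
    6: "ethics committee sign-off, continuous monitoring and a named escalation owner",
}


def required_review(autonomy: Level, consequence: Level) -> str:
    """Scale review depth with the system's autonomy and the impact of its decisions."""
    score = int(autonomy) + int(consequence)
    return REVIEW_BY_SCORE[score]


print(required_review(Level.LOW, Level.LOW))
print(required_review(Level.HIGH, Level.MEDIUM))
```

Keeping the mapping explicit in code or configuration also supports component 6: when a model update changes a system's autonomy or consequence rating, rerunning the lookup shows whether its review requirements have changed.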

A notable recent example of putting AI ethics into practice goes beyond organizational policy and integrates ethical principles directly at the model training stage. The Constitution published by Anthropic in January 2026 is the first public document from a frontier laboratory that encodes values directly into the training process, with an explicit and reasoned priority ordering among safety, ethics, compliance with guidelines, and helpfulness. The conceptual shift is significant: rather than imposing rules externally, the system is designed to internalize the reasoning behind each principle, so that it can generalize that judgment to unanticipated situations.

However, efforts to operationalize AI ethics face a fundamental challenge: current frameworks often fail to specify what type of entity is being governed.

What are we creating? The question that the frameworks do not answer

Current regulatory frameworks, including the European Union's AI Act, classify AI systems by level of risk and domain of application. This classification is operationally useful and provides some ethical cover (in the sense of protecting against potential abuse of AI), but it does not distinguish between the different types of relationship a system establishes with humans. A credit scoring system, a conversational assistant, and an autonomous agent negotiating contracts on behalf of an organization can fall into the same regulatory risk category and yet operate on fundamentally different ethical grounds. A system that adapts its behavior to the person it is addressing, maintains consistency over time, and produces responses that are contextually indistinguishable from those of a person with genuine understanding raises questions that conventional regulatory frameworks are not equipped to address.

These questions fall into four categories, all of which are actionable for organizations deploying AI systems:

- Ontology: What kind of entity is this system, and what categories are relevant for describing and governing it?

- Epistemology: How does the system verify the information it provides, and how can humans validate the reliability of its outputs?

- Theory of mind: Does the system possess any form of internal “experience” (even rudimentary), and what implications does this have for those who design and deploy it?

- Applied ethics: What obligations does the system create for the organization that uses it, beyond what current regulations explicitly require?

These are not speculative questions. In January 2026, Anthropic publicly acknowledged that its Claude model "may possess some form of consciousness or moral status," becoming the first frontier laboratory to make this claim public. The significance of this acknowledgment lies not only in what it states, but in what it reveals: that a top-tier company admits it cannot answer with certainty the question of what it has created.

All decisions about how to use, audit and regulate an AI system implicitly incorporate an answer to that question. In most organizations, that answer is given by default, without explicit deliberation.

Four fractures: unanswered questions

The expansion of AI not only amplifies known ethical dilemmas: it also creates conceptual fractures for which current legal and ethical frameworks provide no structured answers.

The first is the epistemic crisis of verification. AlphaFold has predicted the structure of more than 200 million proteins, and more than three million researchers in 190 countries use them as the basis for their work. A significant portion of these structures cannot be verified experimentally with conventional scientific methods, yet they are used to design drugs and guide clinical decisions. The underlying question is not whether the system works (it does so with an unprecedented level of accuracy), but what ethical and regulatory protocols apply to a scenario where scientifically operational knowledge becomes computationally inaccessible to human verification.

The second is the asymmetry of liability for emerging harms. Legacy ethical and legal frameworks assume identifiable actors with discernible intentions. AI systems, however, can produce harms without direct deliberate intent and with causation distributed among multiple actors (designers, trainers, deployers and users), creating what the literature calls the 'many hands problem': situations in which moral and legal responsibility is structurally diluted as the causal chain fragments. Current liability frameworks and compliance regimes have no structured response to this type of diffuse causation.

The third is the representation of people not yet born. AI systems are trained on historical data, so their biases reflect the human past, amplified at scale. No AI ethics framework today incorporates mechanisms that represent the interests of generations that have not yet been born, and thus have not produced data, yet will live with the consequences of systems designed in the present. This issue is particularly relevant for applications with long time horizons, from urban planning to climate risk modeling.

The fourth is free will as a systemic risk. An increasing proportion of individual and organizational decisions are de facto mediated by AI systems whose supply is highly concentrated: three vendors account for 88% of global enterprise spending on language models, and the underlying cloud infrastructure market is similarly concentrated. This concentration implies that biases introduced deliberately or accidentally into one of these models do not affect only individual decisions, but millions of simultaneous processes across different organizations, industries and countries. The cognitive diversity of a society (i.e., its ability to reach different conclusions by different paths) depends, in part, on the systems that mediate its thinking being neither homogeneous nor oligopolistic.
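The 88% figure on its own already bounds how concentrated this market is. The Herfindahl-Hirschman Index (HHI), the sum of squared market shares in percent, is smallest when the three leading vendors split their 88% equally and the remaining 12% is spread across many tiny players. The sketch computes that lower bound; treating enterprise spending shares as market shares is an assumption made here for illustration.

```python
def hhi_lower_bound(top_k_share_pct, k):
    """Smallest possible HHI when k firms jointly hold top_k_share_pct percent.

    HHI is the sum of squared market shares (in percent). For a fixed combined
    share S across k firms, squares are minimised by an equal split (S**2 / k);
    the remaining firms contribute a non-negative amount, bounded below by zero.
    """
    return top_k_share_pct ** 2 / k


bound = hhi_lower_bound(88, 3)  # three vendors with 88% of spending
print(round(bound))  # 2581
```

Even in this most favorable case the HHI exceeds 2,580, well above the 1,800 level that the 2023 US Merger Guidelines treat as highly concentrated.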

These four fractures are not arguments against the adoption of AI, but the conceptual territory that organizations that seriously manage this adoption must learn to inhabit. Their relevance is not diminished by the fact that they are not included in current regulatory frameworks; on the contrary, it is precisely because they are unaddressed that these risks are the least visible and carry the greatest potential for harm.
