Trends in AI

AI Risks, Regulation and Safety


Generative AI and agentic systems offer transformative capabilities, but introduce structural risks that are no longer hypothetical: they are materializing in production. The Bletchley Declaration explicitly warned of potentially "catastrophic" AI impacts, and accumulated experience confirms that the list of risks is long and diverse. This section discusses the risks of AI, the regulatory frameworks and governance of AI, the battle between defensive and adversarial AI in cybersecurity, and the open tensions in privacy and intellectual property.

AI Risks

AI does not create risk: it amplifies it

The adoption of AI does not introduce fundamentally new risks, though there are some exceptions. Instead, it drastically amplifies existing risks: operational, model, technological, vendor, legal, reputational, compliance, strategic, social, etc. The difference lies not in the nature of the risk, but in its speed of propagation, scale of impact, and difficulty of containment.

Except for a few emerging categories (such as toxic content generation, certain forms of cognitive manipulation through deepfakes, or prompt injection attacks), most of the risks associated with AI are accelerated, automated and massive versions of known problems. An algorithmic bias is, in essence, a human bias systematized and replicated millions of times. An information leak due to misuse of a chatbot is ultimately an information leak.

Four interconnected dimensions

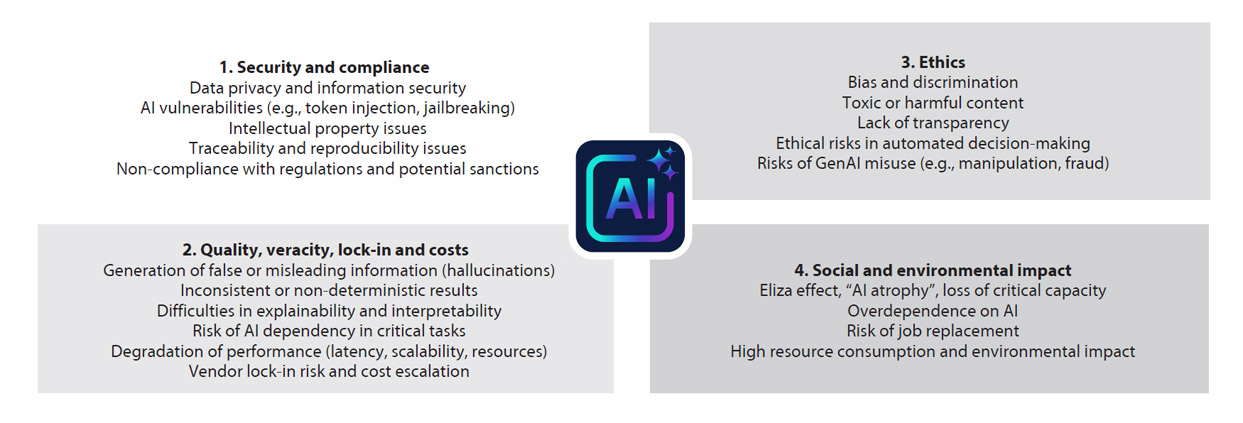

In practice, these risks manifest across four major, interconnected dimensions (Fig. 3):

1. Security and compliance

AI introduces new attack surfaces and complicates regulatory compliance. Privacy and information security face specific threats: unintentional leaks of confidential data from AI systems, emerging technical vulnerabilities such as prompt injection (malicious instructions embedded in inputs that "trick" the model into ignoring restrictions) or jailbreaks (techniques for circumventing security controls), and accidental exposure of sensitive information when professionals use unsecured tools.

Intellectual property becomes ambiguous: who owns the AI-generated code, what happens when a model reproduces copyrighted fragments? As an example, the New York Times has open litigation with OpenAI/Microsoft for using copyrighted content without a license, among many other ongoing lawsuits.

Traceability and reproducibility are degraded when critical decisions rely on models that continually evolve through automatic retraining, making it difficult to reconstruct exactly which version of the system produced which result at which time.
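One way to keep results reconstructible is to store, alongside every decision, the exact model version that produced it. The sketch below is illustrative only: the `DecisionRecord` fields, the `record_decision` helper, and the version tag format are assumptions rather than a prescribed schema.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class DecisionRecord:
    """One auditable entry: which model version produced which output, and when."""
    model_name: str
    model_version: str   # hypothetical version tag, e.g. a model registry ID
    input_sha256: str    # hash of the input, so the log does not hold raw personal data
    output: str
    timestamp_utc: str


def record_decision(model_name, model_version, raw_input, output, now=None):
    """Build an immutable audit record for a single model decision."""
    now = now or datetime.now(timezone.utc)
    digest = hashlib.sha256(raw_input.encode("utf-8")).hexdigest()
    return DecisionRecord(model_name, model_version, digest, output, now.isoformat())


# Hypothetical usage: the names and values are placeholders.
record = record_decision("credit-scoring", "2025-03-01.r2", "applicant payload", "approve")
print(json.dumps(asdict(record), indent=2))
```

Hashing the input rather than storing it is a design choice: it lets an auditor confirm that a given input produced a given output without the log itself becoming a store of sensitive data.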

Regulatory non-compliance now has direct and visible financial consequences: the European AI Act provides for fines of up to 35 million euros or 7% of annual global turnover, making AI compliance a first-order material risk, with penalties exceeding even those for data protection.

2. Quality, reliability, lock-in and costs

As already mentioned, Generative AI is intended to produce plausible outputs, not necessarily correct ones. Hallucinations (generation of false information presented with confidence) are not occasional bugs, but behaviors intrinsic to the actual design of the models. Non-deterministic behavior implies that the same input can produce different outputs in the same model, which complicates validation, auditing and certification of critical processes.

Silent model drift represents one of the most insidious risks: a model that was working properly can degrade in a short time because the data distribution changed, without the system generating early warnings. A model can continue to operate with the appearance of normality until someone manually detects anomalies in the results.
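A common way to turn silent drift into an explicit alert is to compare the distribution of live inputs or scores against a reference sample on a schedule. The sketch below uses the Population Stability Index (PSI), which is a technique chosen here for illustration and not one named in the text. The bin edges, the sample data, and the 0.25 alert threshold (a widely used rule of thumb) are all assumptions.

```python
import bisect
import math


def psi(expected, actual, edges):
    """Population Stability Index between two samples over shared bin edges.

    Values are assumed to lie within [edges[0], edges[-1]].
    """
    def shares(values):
        counts = [0] * (len(edges) - 1)
        for v in values:
            idx = bisect.bisect_right(edges, v) - 1
            idx = max(0, min(idx, len(counts) - 1))  # clamp boundary values
            counts[idx] += 1
        total = sum(counts)
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / total, 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


edges = [0.0, 0.25, 0.5, 0.75, 1.0]
baseline = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8]   # scores at validation time
shifted = [0.6, 0.7, 0.7, 0.8, 0.8, 0.9, 0.9, 1.0]    # scores observed in production

ALERT_THRESHOLD = 0.25  # assumed rule of thumb, to be tuned per use case
for name, sample in [("unchanged", baseline), ("shifted", shifted)]:
    score = psi(baseline, sample, edges)
    print(name, round(score, 3), "ALERT" if score > ALERT_THRESHOLD else "ok")
```

Running such a check automatically replaces the "someone manually detects anomalies" step with a metric that can trigger an early warning.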

Over-reliance on AI in critical tasks creates operational fragility: if the AI system fails, crashes, or becomes prohibitively expensive, can the organization continue to operate? Are there human contingency procedures? And loss of effective human control occurs when decisions are delegated to systems whose internal reasoning is opaque even to those who develop and operate them.

Moreover, organizations build structural dependencies on a few model providers (OpenAI, Anthropic, Google) and infrastructure (AWS, Azure, GCP). Changes in pricing, terms of service or operational outages can cripple critical processes simultaneously. Migrating between ecosystems involves rewriting integrations, retraining workflows, recertifying compliance and assuming prohibitive costs, which is not always feasible; diversifying suppliers, although costly, is the structural response to this risk.

Added to the above risks is an economic dimension that traditional management frameworks underestimate. Unit inference costs are structurally declining (approximately 10x every 12 months), but this decline does not shield against the exponential growth in volume. Agentic systems have cost structures that scale non-linearly: each agent multiplies calls to models and tools, and without real-time token monitoring and explicit spending limits built into the design, a viable prototype can become an unsustainable system before anyone detects it. Added to this is uncertainty about return on investment: there is no definitive answer as to whether heavy investment in AI will produce the expected benefits, and many organizations are moving forward driven by competitive pressure rather than a robust business case. Gartner synthesizes both risks in one prediction: more than 40% of agentic projects will be cancelled by 2027 due to cost escalation, unclear business value, and inadequate risk controls. Lock-in exacerbates the problem structurally: dependence on a single vendor eliminates the ability to migrate to more efficient architectures when they become available.
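The idea of explicit spending limits built into the design can be sketched as a small guard that every model call must pass through. The `TokenBudget` class, the price per 1,000 tokens, and the call sizes below are hypothetical; a real deployment would take token counts from the provider's usage metadata.

```python
class BudgetExceeded(RuntimeError):
    """Raised when a call would push spend past the hard limit."""


class TokenBudget:
    """Tracks cumulative spend and refuses calls once the limit would be crossed."""

    def __init__(self, limit_usd, usd_per_1k_tokens):
        self.limit_usd = limit_usd
        self.usd_per_1k_tokens = usd_per_1k_tokens
        self.spent_usd = 0.0

    def charge(self, tokens):
        cost = tokens / 1000 * self.usd_per_1k_tokens
        if self.spent_usd + cost > self.limit_usd:
            raise BudgetExceeded(
                f"call of {tokens} tokens would exceed the {self.limit_usd:.2f} USD limit"
            )
        self.spent_usd += cost
        return cost


# Hypothetical agent loop: each step consumes 30,000 tokens at an assumed price.
budget = TokenBudget(limit_usd=1.00, usd_per_1k_tokens=0.01)
completed = 0
try:
    for _ in range(10):
        budget.charge(30_000)   # charge before the (omitted) model call
        completed += 1
except BudgetExceeded as exc:
    print(f"stopped after {completed} calls: {exc}")
```

Charging before the call, not after it, means a runaway agent loop stops at the limit instead of being discovered on the invoice.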

3. Ethics and Automated Decision Making

Algorithmic biases amplify pre-existing biases in historical data: if a personnel selection model is trained with data from an organization historically biased towards certain profiles, the model will systematize and scale that bias. The lack of transparency and the challenges of explainability make it harder to meet regulatory requirements that expect automated decisions to be understandable and justifiable, especially when they affect fundamental rights.

Automated decisions with direct human impact (credit approval, medical diagnoses, criminal risk assessment, candidate selection) raise liability issues: who is liable when the model makes a mistake with serious consequences: the provider of the base model, the team that customized it, the user who wrote the prompt, the committee that approved its deployment? The chain of responsibility becomes complex, creating accountability gaps that neither regulation nor practice has fully resolved.

4. Social and organizational impact

Job transformation is no longer speculative: routine manual and cognitive tasks face accelerating automation, and organizations must manage job transitions, reskilling programs, and increasing regulatory and social expectations about corporate responsibility.

Cognitive dependence and erosion of critical capabilities represent a major risk: if an entire generation of professionals comes to rely exclusively on AI as an intermediary, will they retain sufficient intuition and knowledge to critically assess outcomes? What happens if AI is not available?

The pressure on resources and the environmental footprint are also significant: training advanced models consumes as much energy as thousands of homes would use over several months, and the continuous, large-scale inference performed by these models further increases energy demand, raising questions about long-term sustainability.

Fig. 3. Some risks of generative AI.


Nonlinear amplification

AI introduces a key phenomenon in risk management: nonlinear amplification. A minor glitch (a data bias, a poorly designed prompt, a permission misconfiguration) can escalate in minutes and simultaneously affect processes, customers, regulators and reputation. The greater the autonomy and integration of AI into critical processes, the wider the potential impact of any failure.

A concrete example: after a minor change in the system prompt, a customer service model starts to reveal confidential information from other customers in as little as 0.01% of conversations, a rate that is almost undetectable in testing. In a system that handles 100,000 interactions per day, this means 10 leaks per day before the pattern is detected. By the time the problem is identified, hundreds of incidents have already occurred, each with regulatory, contractual and reputational implications.
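The arithmetic behind this example, and the reason such a rate slips through testing, can be checked in a few lines. The figures come from the example above; the 1,000-conversation test size and the assumption that leaks occur independently are illustrative.

```python
leak_rate = 0.0001             # 0.01% of conversations
daily_interactions = 100_000

# Expected number of leaking conversations per day in production.
expected_leaks_per_day = leak_rate * daily_interactions


def p_missed(test_conversations):
    """Probability that a test run of this size contains no leak at all,
    assuming each conversation leaks independently at leak_rate."""
    return (1 - leak_rate) ** test_conversations


print(f"expected leaks per day: {expected_leaks_per_day:.0f}")
print(f"chance a 1,000-conversation test sees nothing: {p_missed(1_000):.1%}")
print(f"expected leaks after 30 undetected days: {expected_leaks_per_day * 30:.0f}")
```

Under these assumptions a 1,000-conversation test has roughly a 90% chance of showing no leak at all, while a month of production traffic accumulates about 300 incidents.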

AI in the corporate risk taxonomy

Organizations are taking one of three approaches to integrating AI risk into their corporate risk framework:

- Treat it as a top-level risk, creating a new category in the taxonomy (a very rare approach, but useful for gaining executive visibility in the early stages of mass adoption).

- Treat it as a second level risk, linked to technological, model or operational risks within the existing taxonomy.

- Do not treat it as an independent category, but as a transversal driver that amplifies existing risks (model risk, supplier risk, reputational risk, etc. – amplified by AI).

In practice, the label is less important than the organization's ability to identify, prevent, control and mitigate these risks systematically and continuously, with clear metrics, assigned responsibilities and defined escalation mechanisms.

Strategic implication

AI risk management has become a strategic decision that conditions the speed of adoption, operational viability and institutional credibility of the organization.

Organizations that approach AI primarily as a technological innovation project encounter friction with the regulatory framework, operational incidents and reputational tensions. By integrating AI from the outset into governance, internal controls, and risk management frameworks, organizations can scale more smoothly, achieve greater stability, reduce friction, and build greater trust from regulators, the market, and their own professionals.

Sustainable competitive advantage arises from a superior ability to govern AI: well-defined control frameworks, explicit responsibilities, continuous monitoring and an organizational culture that keeps human judgment at the center of critical decisions.

AI Regulation, Oversight and Standards

AI, a regulated activity

The rapid adoption of AI and the emergence of its associated risks have prompted an unprecedented global regulatory response. Unlike previous technology cycles, where regulation reacted with years of delay, AI is being regulated in parallel with its massive deployment, precisely because its systemic risks are already materializing.

Europe has taken the regulatory lead with the AI Act, the first comprehensive legal framework on AI. It is followed by significant initiatives in the US, China, the UK, Canada and other countries, along with a growing ecosystem of technical standards and voluntary frameworks that seek to operationalize principles of governance, security and ethics.

The consequence for organizations is clear: AI management is no longer a technological issue but a structural regulatory domain, comparable in impact to that of data protection, financial markets or operational security.

The AI Act: the most prescriptive regulation

The European AI Regulation (Regulation (EU) 2024/1689) establishes a risk-based regulatory model, structuring obligations according to the potential impact of systems on fundamental rights, security and public order.

The framework classifies AI systems into four main categories:

1. Unacceptable risk: prohibited systems (cognitive manipulation, social scoring, certain forms of biometric surveillance).

2. High risk: systems used in critical areas such as credit, employment, education, infrastructure, justice, healthcare, and biometrics. This is the core of the AI Act.

3. Limited risk: systems requiring transparency obligations.

4. Minimal risk: systems that are free to use under some general principles.

For high-risk systems, the AI Act introduces a set of structural obligations:

- Documented risk management system

- Data governance and quality control

- Comprehensive technical documentation

- Logging and traceability

- Effective human oversight

- Accuracy, robustness and cybersecurity requirements

- Pre-deployment compliance assessment and ongoing post-market surveillance.

Penalties for serious non-compliance with the AI Act reach €35 million or 7% of annual global turnover, exceeding even the GDPR regime (which reaches 4%).
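As a rough illustration of how the two ceilings interact, the sketch below assumes the higher of the two amounts applies, which is how the Regulation words the cap for undertakings. The function name and the sample turnover figures are hypothetical, and actual fines depend on the infringement and on the supervising authority.

```python
def penalty_ceiling_eur(annual_global_turnover_eur,
                        fixed_cap_eur=35_000_000,
                        turnover_percent=7):
    """Upper bound of the fine for the most serious infringements,
    assuming the higher of the fixed cap and the turnover share applies."""
    turnover_based = annual_global_turnover_eur * turnover_percent / 100
    return max(fixed_cap_eur, turnover_based)


# Hypothetical companies: below, at, and above the 500 million euro crossover.
for turnover in (100_000_000, 500_000_000, 2_000_000_000):
    print(f"turnover {turnover:>13,} EUR -> ceiling {penalty_ceiling_eur(turnover):>13,.0f} EUR")
```

Above 500 million euros of turnover the percentage dominates, which is why the exposure of large groups is measured in share of revenue and not in a fixed amount.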

Supervision in Europe: from innovation to permanent control

The AI Act establishes a new institutional architecture:

- AI Office: central technical body of the European Commission for AI, especially GPAI.

- National supervisory authorities: supervise and sanction compliance with the AI Act in each country.

- AI Board: forum for coordination and common interpretation between the Commission and the national authorities.

- Cross-border cooperation mechanisms: rules for coordinating cases and investigations involving several Member States.

Supervision will not be limited to one-off audits: a continuous surveillance model is established, with reporting obligations, serious incident management, withdrawal of unsafe systems and inspection powers comparable to those of financial regulators.

In practice, the AI Act's supervisory architecture is still under construction. The AI Board, the central coordinating body between Member States and the Commission, held its first formal meeting in September 2024, and has focused on organizational issues, codes of practice for general-purpose AI (GPAI) models, and coordination of national authorities. The minutes reveal a process still in the organizational phase: selection of a chair, creation of subgroups and discussion on priority deliverables.

For their part, most national supervisory authorities have yet to be designated. Spain created the AESIA (Agencia Española de Supervisión de la Inteligencia Artificial) in August 2023, becoming the first AI authority in Europe, and started effective operations in February 2025 with oversight functions for prohibited systems. Other Member States are in earlier stages of designating authorities.

The standards ecosystem: from principles to operation

In parallel to regulation, a web of technical and management standards that seek to establish norms for AI operational practice is consolidating; among them:

- ISO/IEC 42001: establishes requirements for an AI management system (AIMS), ISO 27001/9001-style, for governance and responsible use of AI.

- ISO/IEC 23894: provides a detailed AI risk management lifecycle framework, intended to integrate with enterprise risk management frameworks.

- ISO/IEC 5259: AI data quality framework and metrics.

- NIST AI Risk Management Framework: a framework for AI risk management, organized around the Govern–Map–Measure–Manage functions and designed to support trustworthy AI principles.

- OECD AI Principles: high-level principles (human values, transparency, robustness, accountability, inclusiveness) that have directly influenced the AI Act and other regulatory frameworks.

These standards play an essential role: they establish concrete controls, auditable processes, and verifiable metrics. For many organizations, they are becoming the technical foundation of their compliance programs.

Geopolitical fragmentation and operational complexity

The European risk-based and binding approach is not being replicated globally:

- The United States lacks a federal framework, relying instead on a fragmented sectoral approach by agencies (FTC, FDA, EEOC), with no equivalent to the AI Act. This is supplemented by executive orders, agency guidance, and regulation in some states (e.g., California, Colorado, Connecticut, Utah).

- China articulates AI within a state strategy of "digital sovereignty", with specific rules on algorithms, deepfakes and generative models, strong data control and compulsory licensing for certain high-impact systems.

- The United Kingdom maintains a pro-innovation approach without a horizontal AI law, relying on sectoral authorities and common AI regulatory principles, supported by guidelines and regulatory sandboxes.

- Brazil passed a Senate bill in December 2024 that is structurally similar to the AI Act, featuring a risk-based approach (prohibited/high/limited/minimal), specific obligations for high-risk systems, and fines of up to R$50 million or 2% of turnover. The bill is pending approval in the Chamber of Deputies, with entry into force expected one year after enactment.

- Mexico lacks specific AI regulation: more than 60 bills have been submitted since 2020 without approval. In February 2025 a constitutional reform was introduced to grant the federal government competence over AI, which should lead to a General Law. Until then, there is no specific legal framework, only voluntary guidelines aligned with UNESCO and OECD principles.

- Australia maintains a voluntary and principles-based approach: AI Ethics Principles (2019, eight voluntary principles) and Guidance for AI Adoption (October 2025, replacing the Voluntary AI Safety Standard of 2024). There is no binding legislation specific to AI, and the government has instead focused on reinforcing existing laws (privacy, consumer protection, sectoral).

Strategic implications

Studies agree that the divergence between these models (EU more prescriptive, US fragmented and sectoral, China highly centralized, UK and Australia more principle-based) forces global companies to segment products, models and compliance processes by jurisdiction.

This translates into multi-level AI architectures (governance, data, MLOps, documentation) designed to simultaneously map and reconcile divergent requirements, which increases operational complexity in unprecedented ways for many traditionally less regulated sectors.

AI and Cybersecurity

A battle with new rules and new attack surfaces

AI is radically transforming cybersecurity along three simultaneous dimensions: it amplifies attackers' offensive capabilities, boosts organizations' defenses, and at the same time introduces new vulnerabilities that require targeted protection.

Offensive AI: automation and industrial-scale adaptation

Attackers have adopted AI with astonishing speed, transforming traditional methods into qualitatively different threats. The impact is quantifiable: more than 28 million AI-powered cyberattacks were reported globally in 2025, an increase of 47% year-on-year; the financial sector was the most affected, with 33% of these attacks; and 87% of organizations experienced at least one AI-assisted attack in the past 12 months.

Hyper-personalized phishing on a massive scale. AI-generated phishing attacks increased by 1,265% in the year following the launch of ChatGPT, and more than 80% of phishing emails now use language models for text generation. The qualitative difference is dramatic: while traditional phishing achieves 12% success rates, AI-generated campaigns achieve 54% click-through rates. LLMs enable the creation of personalized messages by analyzing public profiles, corporate writing style and victim-specific contexts, at speeds and volumes that are impossible to reach manually.

Adaptive polymorphic malware. 76% of malware detected in 2025 exhibited AI-powered polymorphic characteristics. Unlike traditional polymorphic malware (which mutates through routine obfuscation or encryption), AI-generated malware dynamically rewrites its code in real time, maintaining identical functionality, but with completely different signatures. Some advanced variants generate unique versions every 15 seconds during an attack. This defeats static signature-based detection systems, which have historically been the basis of traditional antivirus.

More worrisome: AI not only generates variants but adapts behavior. Machine Learning models embedded in malware analyze the execution environment, detect monitoring systems and adjust their tactics in real time to evade detection.

Deepfakes and identity manipulation. According to cybersecurity analysis based on IC3 2025, AI-assisted Business Email Compromise (BEC) attacks are estimated to have increased by about 37%, combining synthetic text, audio and video to impersonate executives. The most notorious case: an audio deepfake of the Italian Defense Minister that caused significant financial losses. In a survey, 85% of organizations reported having experienced at least one deepfake attack in 2025.

Dark LLMs and specialized offensive tools. Language models modified specifically for cybercrime have proliferated: HackerGPT, WormGPT, GhostGPT, FraudGPT. These systems, created by jailbreaking ethical models or modifying open-source models, are marketed on dark web forums with subscription models and technical support. They generate malicious scripts, exploits, and social engineering campaigns without ethical restrictions.

Defensive AI: behavioral detection and automated response

Organizations are responding with equally sophisticated defensive AI. Fifty-one percent of enterprises now use AI or automation in security, and adoption is accelerating rapidly in the face of evidence of demonstrable ROI.

Behavioral analysis and anomaly detection. AI-powered User and Entity Behavior Analytics (UEBA) systems establish dynamic baselines of normal behavior for users, devices and applications by analyzing billions of daily events. Instead of looking for known signatures, they detect subtle deviations from established patterns. This capability is critical against polymorphic malware and zero-day attacks: in high-risk environments, AI-based systems achieve detection rates of up to 98% against known threats or those exhibiting recognizable anomalous patterns. Against genuinely novel attacks, with no prior signature or behavioral pattern, behavior-based detection reduces risk but does not eliminate uncertainty: defensive AI does not recognize the new threat itself, but rather its deviation from the norm. This means that attacks that are sufficiently cautious, or intentionally designed to mimic legitimate behavior, may evade that initial detection.
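The baseline-and-deviation principle can be illustrated with a deliberately simple statistic. Production UEBA systems use far richer models; the z-score test, the threshold of 3, and the download volumes below are assumptions chosen only to show the mechanism and its blind spot.

```python
import statistics


def is_anomalous(history, observed, threshold=3.0):
    """Flag an observation that deviates from the user's own baseline
    by more than `threshold` standard deviations."""
    mean = statistics.fmean(history)
    stdev = statistics.stdev(history)
    if stdev == 0:
        return observed != mean
    return abs(observed - mean) / stdev > threshold


# Hypothetical baseline: megabytes downloaded per day by one user.
baseline_mb = [120, 135, 110, 128, 140, 125, 132]

print(is_anomalous(baseline_mb, 900))   # bulk exfiltration stands out
print(is_anomalous(baseline_mb, 150))   # a cautious attacker stays under the threshold
```

The second call shows the limitation described above: activity that stays close to the established baseline is not flagged, however malicious its intent.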

SIEM/XDR/SOAR platforms with integrated AI. Current Security Information and Event Management (SIEM), Extended Detection and Response (XDR) and Security Orchestration, Automation and Response (SOAR) platforms natively integrate AI to correlate events between disparate systems, reduce false positives (up to 95% reduction in mature deployments), and automate response. CrowdStrike reports that its Falcon platform analyzes 4.7 billion events daily with 24/7 AI-powered threat hunting. Microsoft Sentinel has demonstrated 30% reductions in mean response time (MTTR) through AI-based correlation and behavioral analysis.

Demonstrable economic impact. According to IBM, organizations that use AI and automation extensively in security reduce average breach costs by $1.9 million (more than 50% less than organizations without AI) and shorten containment cycles by 80 days on average. Organizations with AI-driven platforms detect threats 60% faster and achieve 95% detection accuracy.

Multiplying capabilities. AI acts as a capability multiplier for security teams: it automates alert triage (organizations face an average of 4,500 alerts per day), runs automatic response playbooks for known threats, and enables junior analysts to operate at higher levels of effectiveness. Ninety-five percent of security professionals report that AI improves their speed and efficiency in preventing, detecting, responding to and recovering from attacks.

Asymmetry and the strategic dilemma

Despite advanced defensive capabilities, a troubling asymmetry persists: most companies currently lack sufficient maturity to counter advanced AI-powered threats. 78% of CISOs claim that AI-powered threats now have "significant impact" on their organizations.

Cybersecurity has become a battle of AI versus AI, with both sides operating at machine speeds. Attackers automate reconnaissance, generate custom exploits, and adapt their tactics in real time. Defenders correlate terabytes of telemetry, predict attack vectors, and execute autonomous containment. The competitive differential no longer lies in having AI, but in the sophistication of models, the quality of training data, the speed of threat intelligence updates, and the ability to integrate across attack surfaces.

Securing AI: vulnerabilities of its own

Beyond the battle between offensive and defensive AI, a third critical dimension emerges: securing AI systems themselves, which introduce attack vectors with no precedent in traditional software. AI models are vulnerable to specific adversarial attacks: training data poisoning, where attackers inject malicious data to degrade the model; adversarial evasion through imperceptible perturbations that fool the model during inference; and model extraction via repeated queries to steal intellectual property.

LLMs add further vectors documented by OWASP: prompt injection (malicious instructions embedded in inputs that alter model behavior), insecure output handling (applications that blindly rely on outputs without validation), training data poisoning, model denial of service, and supply chain vulnerabilities. In 2024, NIST published specific guidelines for secure development of generative AI, extending its Secure Software Development Framework (SSDF) with differentiated controls for each phase of the lifecycle. Effectively securing AI requires rigorous dataset curation, adversarial robustness testing, input/output validation, sandboxing, and ML-specific red teaming. Security teams must incorporate expertise in adversarial attacks; governance frameworks must treat AI as an independent attack surface with its own controls.
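Output validation, one of the controls listed above, can be sketched as a gate between the model and any downstream action: the application parses the model's output and acts only if it matches an explicit allowlist. The action names, the JSON shape, and the length limit are hypothetical.

```python
import json

ALLOWED_ACTIONS = {"lookup_order", "send_faq_link"}   # hypothetical allowlist


def parse_model_action(raw_output):
    """Return a validated (action, argument) pair, or raise ValueError."""
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError as exc:
        raise ValueError("model output is not valid JSON") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    action = data.get("action")
    argument = data.get("argument")
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        raise ValueError(f"action {action!r} is not on the allowlist")
    if not isinstance(argument, str) or len(argument) > 64:
        raise ValueError("argument must be a short string")
    return action, argument


# A well-formed output passes; an injected instruction is rejected before execution.
print(parse_model_action('{"action": "lookup_order", "argument": "A-1001"}'))
try:
    parse_model_action('{"action": "delete_all_users", "argument": ""}')
except ValueError as exc:
    print("rejected:", exc)
```

Treating model output as untrusted input means a successful prompt injection can at most request actions the application already permits.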

The global cost of cybercrime is estimated in the trillions of dollars annually. Organizations must maintain robust governance: defensive AI systems themselves are now targets for attacks (model poisoning, prompt injection, adversarial evasion), creating a meta layer of risk that requires specialized protection.

AI, Privacy and Intellectual Property

A structural conflict

The mass adoption of generative AI reopens fundamental debates about privacy and intellectual property, pitting business models that rely on massive data against legal frameworks designed for minimization and individual control. The tension is not merely technical: it reflects a structural clash between the operational logic of LLMs and the principles governing data protection and copyright.

Privacy in LLMs: systemic risks throughout the lifecycle

In April 2025, the European Data Protection Board (EDPB) published a comprehensive report on privacy risks in LLMs, developed under its Support Pool of Experts program. The document identifies that each phase of the LLM lifecycle introduces specific privacy and data protection risks:

- Unintentional storage and leakage of personal data. LLMs can memorize fragments of personal data present in training datasets and reproduce them later in generated outputs. This phenomenon is not an occasional bug, but an intrinsic behavior: the model stores statistical patterns that include sensitive data. The EDPB has documented cases where specific prompts have managed to extract personal information (names, emails, phone numbers, medical data) that were in the training data. The scale of the problem grows with both the size of the model and the sensitivity of the data it processes.

- Unintentional re-identification and profiling. Even when data are anonymized prior to training, inference techniques can re-identify individuals by combining multiple model outputs. The EDPB warns that LLMs can generate detailed profiles of individuals without explicit processing of personally identifiable data, which violates the GDPR principles of data minimization and purpose limitation.

- Feedback loops without safeguards. User interactions with chatbots are frequently stored for subsequent fine-tuning of models, so sensitive data revealed in conversations is incorporated into the model without explicit consent or guarantees of subsequent deletion.

- Structural incompatibility with GDPR. The EDPB report highlights irresolvable tensions with GDPR principles:

  - Data minimization: LLMs require massive datasets, which is in direct contradiction to minimization.

  - Right to be forgotten: robust methods to selectively "untrain" models do not yet exist (emerging techniques such as machine unlearning are in experimental phase).

  - Transparency: transformer architectures are black boxes where tracing the origin of specific outputs is technically complex.

  - Consent: data scraped from the internet rarely comes with consent for use in AI training.

- Mandatory impact assessment. The EDPB concludes that, given the systemic nature of processing in LLMs, performing Data Protection Impact Assessment (DPIA) according to GDPR Art. 35 is not only recommended but mandatory in most cases, especially when LLMs process sensitive data or make decisions that affect individuals.

- Technical mitigations with cost. The report proposes measures such as differential privacy (adding statistical noise to prevent identification), federated learning (training models without centralizing data), Retrieval-Augmented Generation (RAG, which separates updatable knowledge from static LLM memory), and retrospective logging minimization (minimizing data in system logs). However, all these techniques imply reduced accuracy, high computational cost, or reduced functionality.
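The accuracy cost of differential privacy, the first mitigation listed above, can be seen in a minimal sketch of the Laplace mechanism applied to a counting query. The `private_count` helper, the epsilon values, and the record count are illustrative assumptions. Applying differential privacy to LLM training uses more involved methods than this.

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(true_count, epsilon, rng):
    """Release a count with epsilon-differential privacy.

    A counting query changes by at most 1 when one person is added or
    removed (sensitivity 1), so the noise scale is 1 / epsilon.
    """
    return true_count + laplace_noise(1.0 / epsilon, rng)


def mean_abs_error(epsilon, rng, true_count=100, trials=5_000):
    """Average distance between the released value and the true count."""
    errors = [abs(private_count(true_count, epsilon, rng) - true_count)
              for _ in range(trials)]
    return sum(errors) / trials


rng = random.Random(0)   # fixed seed so the illustration is repeatable
for epsilon in (1.0, 0.1):
    print(f"epsilon={epsilon}: mean absolute error ~ {mean_abs_error(epsilon, rng):.1f}")
```

A smaller epsilon gives stronger privacy but a noisier answer, which is the reduced-accuracy trade-off the report points to.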

Intellectual property: massive litigation and infrastructure collapse

The World Intellectual Property Organization (WIPO) has devoted multiple sessions of its "WIPO Conversation on IP and AI" to analyzing the impact of generative AI on copyright. The latest sessions focused specifically on infrastructure for rights management, attribution and compensation in the era of generative AI.

Litigation is multiplying; to cite a few examples:

- New York Times vs. OpenAI/Microsoft: the NYT sues over the use of millions of articles without consent, arguing that the models create market substitutes that divert traffic away from its paywall, and generate hallucinations that damage its reputation. The claims amount to billions of dollars in damages.

- Getty Images vs. Stability AI: Getty alleges non-consensual use of over 12 million photographs to train Stable Diffusion, including trademark infringement (since the model replicates Getty's watermark).

- Artists vs. Midjourney/Stability AI: artists argue that their works were scraped without permission to train image-generating models.

- Record labels vs. Anthropic: Universal Music Group (UMG) and other record labels are suing over large-scale infringement through the use of song lyrics.

As of December 2025, more than 72 copyright lawsuits against AI companies are active. So far, three judges have issued preliminary rulings on fair use: two favorable to AI companies, one against. Final decisions are not expected until summer 2026 at the earliest.

Ownership of outputs: a legal vacuum. Who owns AI-generated content? Most jurisdictions (including the US Copyright Office) hold that copyright requires human authorship with "sufficient creative input". Content generated purely by AI without significant human intervention falls into the public domain. But the boundaries are fuzzy: how much human intervention (prompt engineering, selection, post-editing) is "sufficient"?

Collapse of copyright infrastructure. WIPO warns that collective rights management systems, designed for manageable volumes of human works, collapse in the face of the trillions of outputs generated by AI daily. Scalable infrastructures for tracking, attribution and clearing do not exist. Recent WIPO sessions explored the need for new technical infrastructures and regulatory frameworks, but practical solutions remain speculative.

Fragmentation and lack of global consensus

Privacy-preserving technologies (differential privacy, federated learning, homomorphic encryption) offer technical routes to reconcile privacy with AI utility, but are far from mass adoption: they are costly, complex, and reduce performance. The tension between accelerated technological innovation and legal frameworks designed for earlier paradigms remains, for now, unresolved.
