Trends in AI

The Technological Explosion of AI

AI systems have crossed critical thresholds in recent years: they no longer just communicate, they execute; they no longer just suggest, they make decisions that we delegate to them; and they no longer just operate in digital environments, they act on the physical world. What began as a personal productivity tool has evolved into operational infrastructure capable of automating complex, end-to-end cognitive processes. This section examines five technological trends that are redefining the limits of what is possible. These trends do not describe the future: they describe the operational reality of organizations already capturing structural competitive advantages through AI.

Democratization of Multimodal Generative AI

The transformation of intellectual work

In less than five years, generative AI has gone from a technology experiment to business productivity infrastructure. Tools such as Microsoft Copilot, ChatGPT Enterprise and Claude Enterprise have been deployed at scale in workplaces around the world: according to Satya Nadella, Microsoft Copilot is present in 90% of Fortune 500 companies and has surpassed 150 million users, and ChatGPT is estimated to be approaching 900 million users.

This speed of adoption is unprecedented in enterprise technologies: neither the cloud, nor mobile devices, nor collaborative platforms reached this level of penetration so quickly.

What is happening is not an incremental improvement of existing tools, but a reconfiguration of how intellectual work is done. Tasks that used to take hours (writing reports, analyzing lengthy documents, generating code, creating presentations, synthesizing scattered information) can now be completed in minutes, producing an initial useful output through conversational interaction with AI systems.

Effective adoption cases

Productivity improvements are already visible. To cite a few cases:

- In standard office tasks, productivity gains of 10% to 13% in document editing, 11% reductions in email processing time, and 23% faster resolution of IT security incidents have been measured.

- Studies show that scientists using large language models (LLMs) publish up to 50% more scientific papers than before using these tools.

- Empirical studies show that business process automation with generative AI can reduce corporate document processing time by more than 80%, while also lowering error rates.

- In customer service, the introduction of conversational assistants based on generative AI enabled 14% more incidents to be resolved compared to traditional processes.

- A systematic review of AI adoption in the workplace finds consistent increases in efficiency and productivity across a range of tasks following the adoption of generative AI, while also warning of certain risks associated with its use.

Multimodality as a qualitative leap forward

Current models integrate text, images, audio, video and code into a single general‑purpose architecture, a trend that coexists with the parallel development of highly specialized models that outperform general‑purpose systems in specific domains. For example, a user can upload a picture of a financial scorecard drawn on a whiteboard and receive the full code needed to reproduce it; dictate a complete presentation while the system simultaneously generates slides with graphs and charts; provide a regulation and obtain a podcast explaining it; or ask the system to analyze a video of a meeting and extract decisions, commitments, and deadlines.

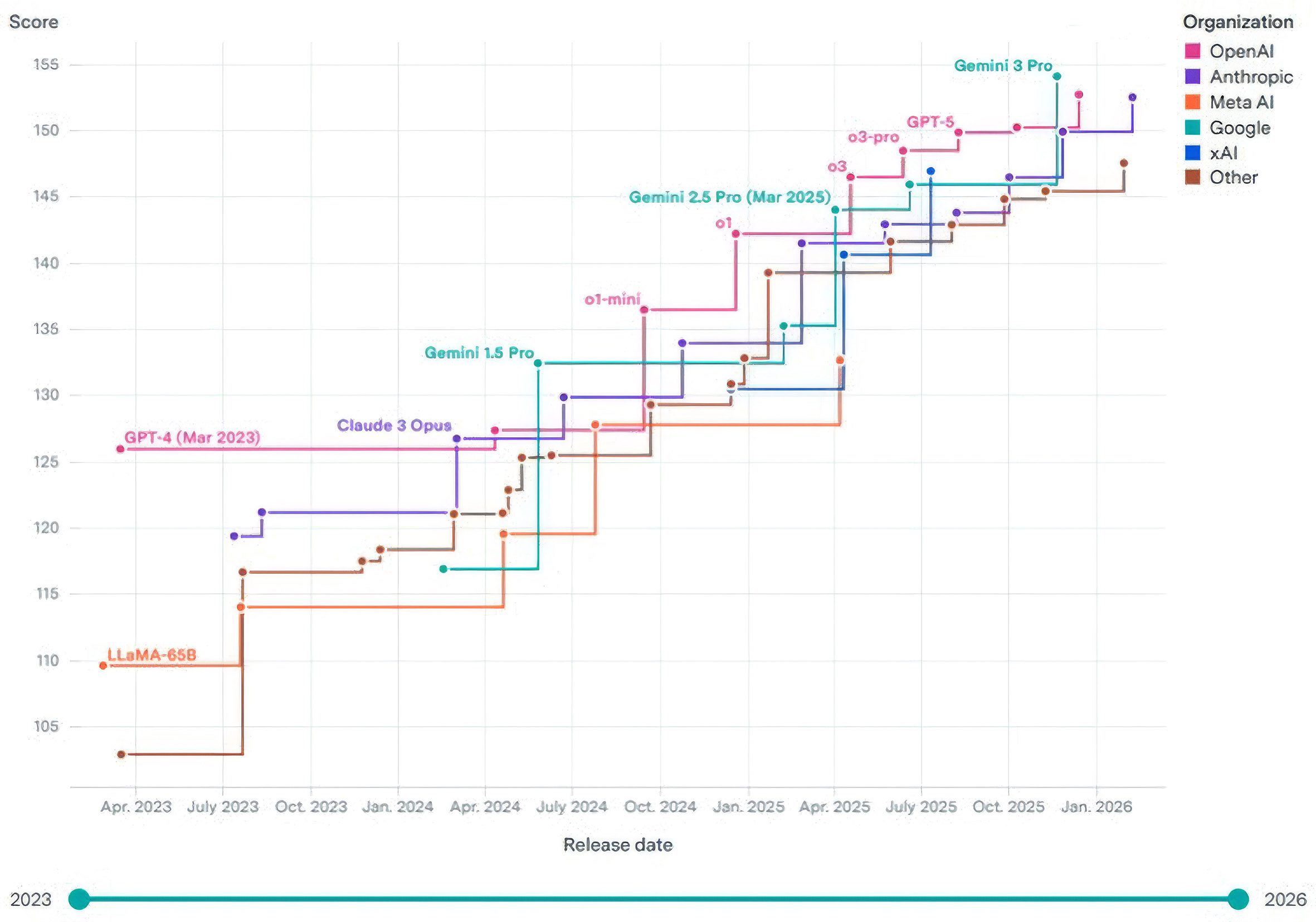

This multimodal convergence is not minor: it redefines the notion of what tasks are automatable. Tasks that previously required multiple specialized tools (e.g., audio transcription, text analysis, graphics generation, report writing) are now solved in a single conversational interaction. The capabilities of these systems continue to increase quarter by quarter with no signs of slowing (Fig. 1), implying that the impact on speed and accessibility will continue to amplify.

Democratization: from technical experts to non-technical profiles

The conversational interface eliminates barriers to entry and goes beyond the concept of "citizen data scientist" (non-technical professionals capable of performing basic analysis with visual tools) to deliver analysis, code generation and advanced processing capabilities directly to end users, without the need for technical training.

This democratization, however, has a double edge: on the one hand, it frees productive capabilities previously limited to specialists; on the other, it spreads risk: now any employee can, without technical supervision, generate content, code or analysis that the organization could use in critical decisions. The key question is not whether to democratize access, but how to govern use on a massive scale.

The problem of verification at scale

Generative AI produces, by design, outputs that are plausible but not necessarily correct, and the root of this issue is structural: these systems are statistical models that predict the most probable continuation of a sequence, not mechanisms that verify the truthfulness of their outputs. This creates a dangerous asymmetry: generating content is instantaneous, while verifying it requires time, expertise, and discipline. In October and November 2025, it was reported that a large consulting firm had delivered two reports to governments containing fabricated or inaccurate citations and references, forcing refunds and corrections and causing significant reputational damage. The reports had been produced with the assistance of generative AI without rigorous verification.

The risk is not in the tool, but in work processes that do not validate results and sources. A well-intentioned professional can introduce catastrophic errors by relying blindly on AI outputs without cross-checking them, and this risk can only be mitigated through AI awareness and literacy.

AI literacy as a regulatory requirement

Article 4 of the European AI Regulation (AI Act) states: "Providers and those responsible for the deployment of AI systems shall take measures to ensure that, to the greatest extent possible, their personnel [...] have a sufficient level of AI literacy, taking into account their technical knowledge, experience, education and training, as well as the intended context of use of the AI systems and the persons or groups of persons on whom the AI systems are to be used."

This is not a recommendation: it is a legal obligation effective February 2, 2025. Organizations that treat this as a compliance checkbox are accumulating operational and reputational risk. Those that approach it as a cultural transformation, integrating continuous learning and communities of practice, are sustainably capturing value.

The inevitability of adoption

The reality is that this technology is already transforming the way we work, with or without organizational support. If companies do not provide secure corporate tools and adequate training, employees will use uncontrolled alternatives: personal accounts on public platforms, free tools with no privacy guarantees, untraceable systems. Studies indicate that up to 35% of the data professionals upload to unsecured chatbots is confidential. Shadow AI is not a future threat: it is a reality today.

Finally, at the individual level, AI literacy is no longer optional. Professionals who master these tools (i.e., understand when and how to use them, how to verify their outputs, and how to integrate them into complex workflows) will have structural competitive advantages over those who do not. The way of working has changed irreversibly, making adaptation essential for professional and organizational competitiveness.

Fig. 1. Continuous improvement of LLMs, as measured by a synthetic capabilities index. Source: Epoch (2025a).

Machine Learning Accelerated by Generative AI

The persistence of Machine Learning

While generative AI is grabbing headlines, classical Machine Learning (ML) continues to be the backbone of critical applications in sectors such as banking, insurance, retail, logistics, energy and telecommunications. Credit scoring models, fraud detection, demand prediction, inventory optimization, predictive maintenance, customer segmentation and recommendation engines continue to operate using algorithms (logistic regression, random forest, gradient boosting, neural networks...) trained on structured historical data. These systems do not generate content like generative AI: they classify, predict and optimize based on learned patterns.

Generative AI does not replace these models: it makes them faster to develop, easier to document, more efficient to validate and simpler to deploy.

The traditional ML lifecycle: costly and time-consuming

Developing a classic ML model has traditionally been time-intensive for specialized professionals. A typical predictive model requires feature engineering, data preparation and cleaning, algorithm selection and training, rigorous validation, thorough documentation, and deployment on production infrastructure with continuous monitoring. This cycle can extend for months, and each iteration or model update replicates much of the effort.

Generative AI can be applied in each of these phases, and has been shown to significantly reduce the time they require, freeing up the most skilled professionals to focus on strategic work. It does not improve the models directly in statistical terms; rather, it transforms the work of the teams that build, document, validate and deploy them. The acceleration lies in the human development cycle, not necessarily in the predictive performance of the resulting model.

Accelerating feature engineering

Feature engineering (the process of constructing predictive variables from raw data) is one of the most knowledge-intensive aspects of data science. A data scientist must combine business understanding, statistical intuition, and iterative experimentation to determine which variables are relevant. Generative AI can help accelerate this process:

- Automated variable generation: an LLM can formulate dozens of candidate variables from descriptions of the business problem and the structure of available data.

- Translation of business logic to code: an analyst can describe complex logic in natural language (e.g., "I want to capture revenue volatility over the last 12 months adjusted for seasonality") and receive the corresponding SQL or Python code in seconds.

- Data pipeline optimization: Generative AI can review data preparation scripts and identify inefficiencies.

Preliminary studies indicate that generative AI-assisted data scientists "save weeks of manual feature engineering work, improving the performance of various predictive models in multiple business scenarios".
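As an illustration of the kind of code such a natural-language request might yield, the following minimal Python sketch implements one possible reading of "revenue volatility over the last 12 months adjusted for seasonality". It assumes at least 24 months of strictly positive revenue and removes seasonality by comparing each month with the same month one year earlier. The function name and this definition of volatility are illustrative assumptions, not a standard; any generated feature of this kind would still need review by the analyst.

```python
from statistics import pstdev


def seasonally_adjusted_volatility(monthly_revenue: list[float]) -> float:
    """Volatility of year-over-year growth across the last 12 months.

    Assumption: comparing each month with the same month one year earlier
    is an acceptable way to strip out the seasonal pattern.
    """
    if len(monthly_revenue) < 24:
        raise ValueError("need at least 24 monthly observations")
    recent = monthly_revenue[-12:]
    prior = monthly_revenue[-24:-12]
    if any(p <= 0 for p in prior):
        raise ValueError("prior-year revenue must be strictly positive")
    # Year-over-year growth rate for each of the last 12 months.
    yoy_growth = [(r - p) / p for r, p in zip(recent, prior)]
    # Population standard deviation of those 12 growth rates.
    return pstdev(yoy_growth)


# Hypothetical customer: strongly seasonal revenue growing 10% every month
# versus the previous year, so the adjusted volatility is close to zero.
seasonal_year = [100.0, 80.0, 120.0, 150.0, 90.0, 110.0,
                 130.0, 95.0, 105.0, 140.0, 160.0, 200.0]
history = seasonal_year + [value * 1.1 for value in seasonal_year]
print(seasonally_adjusted_volatility(history))
```

A feature defined this way is low for customers whose revenue is seasonal but stable, and high for customers whose growth swings from month to month, which is the distinction the business request is trying to capture.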

Automated documentation and regulatory compliance

ML models in regulated industries must be thoroughly documented. In banking, for example, supervisors require each regulated model to include documentation covering its purpose, the data used, statistical methodology, validation process, backtesting results, known limitations, and monitoring plan, among other elements. Producing this documentation is tedious and time-consuming for highly skilled profiles, and updates are therefore prone to inconsistencies.

Generative AI can automate much of this process: generate technical documentation from code, translate technical descriptions into summaries understandable to non-technical committees or auditors, and automatically update documentation when a model is retrained with new data.

Assisted validation and bias detection

Validation of ML models is critical and mandated by regulation in many sectors. It includes verifying the statistical robustness of the model, evaluating its behavior in extreme scenarios, and detecting unwanted biases. Generative AI can assist by automatically generating and running complete batteries of relevant statistical tests, evaluating algorithmic fairness using fairness metrics, and proposing and running realistic stress scenarios.
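To make the reference to fairness metrics concrete, the sketch below computes two widely used group-level measures: the demographic parity difference (the gap between the highest and lowest approval rates across groups) and the disparate impact ratio (lowest rate divided by highest). The function names, the sample data and the choice of these two metrics are illustrative assumptions; the appropriate metric and tolerance depend on the use case and the applicable regulation.

```python
from collections import defaultdict


def selection_rates(decisions: list[int], groups: list[str]) -> dict[str, float]:
    """Share of positive decisions (1 = approved) within each group."""
    if len(decisions) != len(groups) or not decisions:
        raise ValueError("decisions and groups must be non-empty and aligned")
    totals: dict[str, int] = defaultdict(int)
    positives: dict[str, int] = defaultdict(int)
    for decision, group in zip(decisions, groups):
        totals[group] += 1
        positives[group] += decision
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_difference(decisions: list[int], groups: list[str]) -> float:
    """Gap between the highest and lowest group approval rates (0 = parity)."""
    rates = selection_rates(decisions, groups).values()
    return max(rates) - min(rates)


def disparate_impact_ratio(decisions: list[int], groups: list[str]) -> float:
    """Lowest group approval rate divided by the highest (1 = parity)."""
    rates = selection_rates(decisions, groups).values()
    highest = max(rates)
    # If no group receives any approval, there is no disparity to measure.
    return 1.0 if highest == 0 else min(rates) / highest


# Hypothetical model decisions for two groups of four applicants each.
decisions = [1, 1, 0, 1, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
print(selection_rates(decisions, groups))
print(demographic_parity_difference(decisions, groups))
print(disparate_impact_ratio(decisions, groups))
```

In this hypothetical sample, group "a" is approved three times out of four and group "b" once out of four, so the difference is 0.5 and the ratio is one third, a gap that a validation team would be expected to investigate.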

Industrialized deployment and monitoring

Once validated, the model must be deployed in production environments and continuously monitored for performance degradation (model drift). Generative AI accelerates this phase as well: it can generate infrastructure code (Docker, Kubernetes, CI/CD pipelines) to deploy models in a reproducible and scalable way, produce performance metrics dashboards, set up automatic alerts when anomalies are detected, and generate automatic retraining scripts.
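As an example of the kind of monitoring script that could be generated for this phase, the sketch below computes the Population Stability Index (PSI), one common way to quantify how far the distribution of a score or input variable in production has moved from the distribution seen at training time. The bin edges, the function names and the alert threshold are assumptions for illustration; thresholds such as 0.1 or 0.25 are often cited as rules of thumb, but each organization sets its own.

```python
import math


def bin_shares(values: list[float], edges: list[float]) -> list[float]:
    """Share of observations falling into each bin defined by sorted edges."""
    if not values:
        raise ValueError("values must not be empty")
    counts = [0] * (len(edges) + 1)
    for value in values:
        # The bin index is the number of edges strictly below the value.
        counts[sum(value > edge for edge in edges)] += 1
    return [count / len(values) for count in counts]


def population_stability_index(expected: list[float], actual: list[float],
                               edges: list[float], eps: float = 1e-6) -> float:
    """PSI = sum over bins of (actual - expected) * ln(actual / expected)."""
    psi = 0.0
    for e, a in zip(bin_shares(expected, edges), bin_shares(actual, edges)):
        # eps avoids log(0) and division by zero for empty bins.
        e, a = max(e, eps), max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


ALERT_THRESHOLD = 0.25  # assumed alert level, not a regulatory value

# Hypothetical scores: training sample versus a shifted production sample.
training_scores = [i / 10 for i in range(1, 10)]
production_scores = [score + 0.3 for score in training_scores]
psi = population_stability_index(training_scores, production_scores, [0.33, 0.66])
if psi > ALERT_THRESHOLD:
    print("drift alert: review or retrain the model")
```

Each term of the sum is non-negative, so the index is zero only when both samples fall into the bins in the same proportions and grows as the production distribution moves away from the training one.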

Classical ML is not going away: it is industrialized

Generative AI does not replace traditional Machine Learning, but it does radically transform its lifecycle. Tasks that used to take weeks (feature engineering, documentation, validation) are now solved in days. This does not mean that data scientists are expendable: it means that they can spend more time on strategic work (understanding the business problem, designing innovative model architectures, interpreting results) and less on mechanical tasks (writing repetitive code, writing standard documentation, or running routine tests).

The net result is an acceleration of ML model time-to-market, a reduction in operational costs, and an improvement in the quality and traceability of deployed systems. For organizations that rely heavily on predictive models, this acceleration can translate into significant competitive advantages: the ability to launch customized products faster, to adapt strategies in real time, and to meet regulatory requirements with less operational friction.

Industry note: banking supervisors and ML

For years, European financial institutions avoided using ML in regulated models (particularly in IRB models for regulatory capital calculation) because they believed supervisors would reject them due to explainability concerns. This perception is now changing.

In 2021, the EBA published a discussion paper on ML in IRB models where it recognized that "Machine Learning techniques have the potential to enhance risk differentiation in IRB models" and set out a set of principles-based recommendations to ensure prudent use of ML in this context. The document did not discourage the use of ML; on the contrary, it set out how to make it compatible with existing regulation (CRR).

Practice confirms this: several European institutions have submitted ML‑based IRB models (for example, for SME portfolios) to the ECB and have obtained approval, provided they adequately justify explainability through techniques such as SHAP values, sensitivity analysis or decision decompositions. Explainability in ML is not an insurmountable barrier: techniques such as SHAP or LIME allow institutions to justify model decisions to supervisors with sufficient rigour. However, it remains only partially resolved: current XAI methodologies work well for technical and regulatory audiences, but translating those explanations into terms understandable to a retail customer or an executive committee remains an open challenge.
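To give a sense of what SHAP-style explanations compute, the following sketch calculates exact Shapley values for a tiny scoring model by enumerating every subset of features. It is not the SHAP library and not any institution's methodology: the linear model, its weights, the applicant and the baseline are hypothetical, and exhaustive enumeration is only feasible for a handful of features (production tools rely on approximations).

```python
from itertools import combinations
from math import factorial
from typing import Callable, Sequence


def shapley_values(predict: Callable[[Sequence[float]], float],
                   x: Sequence[float],
                   baseline: Sequence[float]) -> list[float]:
    """Exact Shapley contribution of each feature to predict(x) - predict(baseline).

    Features outside a coalition are replaced by their baseline value.
    """
    n = len(x)

    def value(coalition: set[int]) -> float:
        mixed = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return predict(mixed)

    contributions = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        total = 0.0
        for size in range(n):
            # Shapley weight for coalitions of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                coalition = set(subset)
                total += weight * (value(coalition | {i}) - value(coalition))
        contributions.append(total)
    return contributions


# Hypothetical linear score with three features.
WEIGHTS = [2.0, -1.0, 0.5]


def score(features: Sequence[float]) -> float:
    return 1.0 + sum(w * f for w, f in zip(WEIGHTS, features))


applicant = [3.0, 2.0, 4.0]
portfolio_average = [1.0, 1.0, 1.0]  # assumed baseline
print(shapley_values(score, applicant, portfolio_average))
```

For a linear model, each contribution reduces to the weight multiplied by the feature's distance from the baseline, and the contributions add up to the difference between the applicant's score and the baseline score. That additivity is what makes this family of techniques useful for justifying an individual decision to a supervisor.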

Vibe Coding and Augmented Software Development

From traditional programming to cognitive dialog with machines

Software development has historically evolved through leaps of abstraction: from assembly programming to high-level languages, from imperative code to declarative frameworks, from manual development to low-code platforms. Each transition eliminated unnecessary technical complexity and brought software creation closer to human intent.

Generative AI represents a distinct qualitative leap: it turns programming into an iterative conversation with cognitive systems. The programmer no longer writes code line by line: he or she describes the desired behavior in natural language, and the system generates, tests, corrects and documents it. This phenomenon, dubbed "vibe coding" by Andrej Karpathy, redefines what it means to program: the developer moves from writing syntax to directing cognitive systems that materialize intent into functional software. In Karpathy's words, "vibe coding is going to terraform software and alter job descriptions".

What is vibe coding really?

Vibe coding is not simply sophisticated code auto-completion; it is software development through iterative natural language interaction with AI models that maintain memory, context and high-level goal understanding.

Its key components include:

- Semantic problem understanding: the system interprets requirements expressed in natural language and translates them into technical architecture.

- Multi-file code generation: the system produces not just fragments, but complete applications with coherent modular structure.

- Assisted execution, debugging and refactoring: the system executes code, detects errors, proposes corrections and optimizes implementations.

- Automatic production of tests and documentation: the system automatically generates test batteries and technical documentation synchronized with the code.

The difference from traditional code assistants is fundamental: traditional tools complete lines or functions, whereas vibe coding systems understand objectives, maintain architectural coherence across extended sessions, and act as cognitive collaborators rather than passive tools.

Acceleration and Democratization of Software Development

The impact of AI-assisted coding on development speed is both measurable and substantial. A field study with 4,867 developers found that task completion rate increased by 26%. An experiment with 187,489 developers showed that they spent 12.4% more time on core programming activities, while reducing time spent on project management and administrative tasks by 24.9%. In other words: projects that used to take months are now completed in weeks or days.

Another disruptive effect is the equalizer: AI narrows the productivity gap between junior and senior developers. While junior developers experience productivity gains of 21% to 40%, senior developers improve by 7% to 16%. This does not mean experience no longer matters; rather, the development bottleneck is shifting. Success now depends less on mastering syntax and more on understanding problems, designing robust architectures, and formulating constraints accurately.

This shift in the skills gap has a direct organizational consequence for those that act on it: the profound democratization of software creation. Business analysts, product managers, consultants and scientists are generating functional prototypes without relying on engineering teams as intermediaries. Software is no longer the exclusive domain of specialized technical profiles. The barrier to entry has moved from knowledge of programming languages to the ability to grasp problems, define objectives, and specify constraints clearly.

The net result is a structural reduction in the marginal cost of creating software, leading to a change in the economics of technology production.

Transformation of technology teams

Within technology organizations, the distribution of roles is changing rapidly. Teams need fewer "low-level programmers" writing routine code, and more system architects, solution designers, quality validators and technical risk auditors. The role of senior developers is evolving: they spend less time on syntax and more on governing architecture, security, scalability and technical debt management.

Research shows that AI-assisted teams require 79.3% fewer contributors per project on average, without sacrificing technical complexity. Small teams are producing systems of a scale and sophistication previously reserved for large engineering departments. In addition, exploration of new technologies is up 21.8%, suggesting that developers are freeing up cognitive capacity to learn, experiment, and expand their technical capabilities.

Quality, technical debt and risk

However, speed has hidden costs. Generating code is instantaneous; maintaining, debugging and scaling it remains difficult. Vibe coding introduces new risk vectors:

- Hidden fragility: generated code may work superficially, but it can contain inefficiencies, vulnerabilities, or unstable dependencies that only surface under production load or in extreme cases. To this we must add an earlier risk in the chain: ambiguous or poorly formulated specifications, which in the past a senior developer would have challenged or sensibly reinterpreted, are now executed literally, without any corrective friction, silently propagating the error from the requirement through to the final product.

- Model dependency: if the AI system that generated the code disappears or changes significantly, the ability to maintain or extend the software is degraded.

- Systemic bugs replicated at scale: a bug in the prompt or model logic can instantly propagate to dozens of projects, multiplying the impact of bugs that previously would have been isolated.

The nature of technical debt is also changing. Before, technical debt was primarily code debt: fast implementations, postponed refactoring, duplication of logic. Now, technical debt includes architecture debt (design decisions implicit in interactions with the AI), prompt debt (poorly formulated instructions that generate suboptimal but functional code), and traceability debt (loss of understanding of why the code does what it does).

Software governance in the age of vibe coding

If anyone can create software through AI conversation, organizational risk multiplies. The problem is no longer just what code exists, but what cognitive system produced it, under what instructions and with what degree of autonomy.

Leading security and development frameworks are already warning of this change. OWASP identifies new structural risks in LLM-based applications, such as prompt injection, insecure output handling and excessive agency: giving an AI system the ability to act without sufficient controls.

At the same time, NIST insists that the classic principles of secure development (traceability, review, testing, change control, continuous validation) must also apply to AI-generated content and the mechanisms that turn it into executable changes.

Consequently, software governance ceases to be solely code governance and becomes cognitive systems governance, forcing the introduction of new layers of control:

- Prompt repositories: catalog, version and audit instructions that generate critical code, as the prompt becomes a risk surface.

- Intention version management: record not only what code was produced, but what objective was pursued and what restrictions were defined.

- Autonomy and permissions control: explicitly limit what actions AI can perform (repository modification, deployments, commands, data access).

- Traceability of design decisions: document which decisions were human, and which were proposed or executed by the AI.

- Validation and assisted review: conduct systematic review of diffs, automatic tests and behavioral audits before accepting changes in production.

Agent-based development tools themselves already explicitly recommend these guardrails (change review, permission control and caution with automatic execution), reflecting that the risk is not theoretical: the attack and failure surface has shifted from isolated code to the full intent > generation > execution loop.

Strategic implications

Vibe coding is not just a technology trend; it is a macroeconomic variable. Organizations that master this capability operate with structural competitive advantages: extremely compressed time-to-market, massive low-cost experimentation, and accelerated organizational adaptability.

For traditional companies, the implication is clear: they are competing against organizations that iterate ten times faster, with teams ten times smaller, and with marginal costs of development that tend to zero. The speed of software creation goes from being an internal operational metric to a determinant of competitive survival.

Agentic AI and Autonomous Systems

From conversational assistants to autonomous operators

Generative AI has transformed intellectual work by enabling professionals to generate content, analyze information and obtain answers through natural conversation. However, these systems remain fundamentally reactive: they respond to prompts, but do not act independently on real systems. Agentic AI represents a qualitative leap: systems that plan, execute complex tasks, make decisions within defined boundaries and operate on corporate infrastructures with full traceability.

An agentic system operates through autonomous agents, each with specific capabilities (reasoning, memory, tool usage, planning), that collaborate to achieve a defined goal. The incremental capabilities of agentic AI over generative AI are structural:

- State and memory: maintains persistent context between interactions, not just within an isolated conversation.

- Dynamic planning: decomposes complex objectives into subtasks, prioritizes them and replans according to results.

- Execution on real systems: uses tools and APIs to modify data, execute commands and complete transactions.

- Multi-agent orchestration: coordinates specialized agents under a central coordinator that manages dependencies and information transfer.

- Full traceability: produces logs, evidence and justifications of each executed step, enabling auditing and supervision.

Agentic AI in production

Agentic AI operates today in global organizations that manage millions of daily transactions. To cite a few examples:

- Deutsche Bank: is deploying a voice-enabled AI agent ("AI banking butler") that acts as a proactive conversational agent and covers everything from care and support to transaction execution and advisory services. The program is part of a technology investment of approximately 600 million euros, with a recurring savings target of around 300 million euros per year by 2028, and is expected to result in a workforce reduction of roughly 10%.

- Ryt Bank: a Malaysian bank that advertises itself as "AI-first", it operates on an architecture of specialized agents where the customer interacts in natural language and the agents interpret the intention, orchestrate processes and execute real transactions on the banking core. The system handles around 80,000 transactions per month, has reduced processes that required 5-8 screens to a single conversational interaction, and has shown significant improvement in customer retention.

- Walmart: in its automated distribution centers, autonomous decision systems coordinate real-time stocking, replenishment and order picking. Walmart reports that these centers double processing capacity with approximately half the staff versus traditional centers, evidencing a structural shift in logistics productivity.

- Amazon: its new logistics management system, Sequoia, orchestrates robots, inventory and order flows through autonomous software that decides where to store, when to move inventory and how to stock pick stations. Amazon reports reductions of up to 75% in inventory handling time and up to 25% in order processing time at centers where it is deployed.

- DHL: in picking operations with autonomous robots integrated with the WMS, systems automatically assign work, optimize routes and close tasks. In production deployments, DHL has reported productivity increases of up to 180% or more in units per hour, along with significant improvements in quality and accuracy.

But what is an agentic system? Five modular layers

These functional capabilities materialize in a specific modular architecture (not simply a language model with tool access) composed of five interdependent layers:

1. Interface and perception: receives user goals, delivers end results, and perceives events from the environment via API gateways, endpoints, and input connectors.

2. Orchestration and scheduling: decomposes complex goals into manageable tasks, decides which agent executes each task and in what order, and manages priority queuing and routing.

3. Agent core: the specialized agents that carry out the assigned tasks, each with its own capabilities (reasoning, memory, tool usage, planning).

4. Tools and services: library of external capabilities (search, code generation, corporate APIs) with standardized connectivity through protocols such as MCP, and prompt management.

5. Memory and knowledge: stores short-term information (conversation history), long-term information (vector database with past experiences) and corporate knowledge base.

This architecture turns autonomy into something traceable, auditable and governable. Every decision, every action, every invocation of tools is recorded, enabling effective human oversight and regulatory compliance.

MCP: the missing link to scalability

Agentic architecture faces a fundamental technical challenge: the multiplicative complexity of integrations. Traditionally, each language model requires a proprietary integration with each tool, so N models and M tools require N × M integrations. Changing models requires rewriting all integrations, and adding a new tool requires integrating it with all existing models. The result is technical debt that grows with every model and every tool added.

Model Context Protocol (MCP) solves this problem through a universal abstraction layer. MCP is an open protocol that standardizes how AI models interact with external applications, data sources and tools. MCP servers expose capabilities, such as resources, prompts, and tools, that MCP clients (agents or models) can consume on demand. Once a tool is connected to MCP, it becomes immediately accessible to any current or future agent, without the need to redevelop integrations: roughly N + M connectors replace the N × M point-to-point integrations.

The impact of MCP is transformative: it replaces hand-crafted integrations with universally reusable assets, facilitates the transition from isolated prototypes to scalable ecosystems, enables agents to acquire new capabilities without redeployments, and dramatically reduces the cost of maintenance and evolution. Without MCP or equivalent standards, agentic AI at enterprise scale is not technically sustainable.

Governance and control: the real challenge

Building agents is relatively straightforward with current frameworks; governing them at enterprise scale is the real challenge. Sustainable adoption requires balancing four capabilities:

- Technology and development: multi-agent orchestration with effective coordination, operational and long-term memory management, modular architectures that allow maintenance and evolution, and secure integration with corporate systems through standards such as MCP.

- Continuous evaluation: performance, quality and efficiency metrics, monitoring of deviations and anomalies, cost control (calls to models multiply exponentially) and full traceability of actions and decisions.

- Governance and compliance: human oversight through approval and escalation mechanisms (bearing in mind that human supervisory capacity has a ceiling; once that limit is exceeded, supervision becomes nominal and creates a false sense of control), explicit controls and limits on what actions each agent can execute, transparency and functional explainability of decisions, and compliance with the AI Act and internal risk policies.

- Industrialization and operating model: 24/7 operation with continuous maintenance, CI/CD pipelines to securely deploy and update agents, active cost management in production, and resilience to failures or unexpected behaviors.

Strategic implications

The adoption of agentic AI has three critical strategic implications:

- Transformation of the operating model: agentic AI introduces a structural change where human teams collaborate with autonomous agents operating 24/7 without continuous supervision. It radically compresses time-to-market: tasks that used to take weeks are now solved in days through delegation to specialized agents.

- Risk of cost escalation: unlike traditional generative AI, agents multiply calls to models and tools. A viable prototype can become an economically unsustainable system if it is not designed with cost controls from the start.

- Industrialization as a barrier to entry: building agentic prototypes is possible with current frameworks, but operating, scaling, maintaining, securing and auditing them in production requires organizational capabilities that most companies have not yet developed. The competitive advantage is not in technology, but in the ability to industrialize it with effective governance.

AI in Robotics and Physical Systems

From digital to real-world action

For years, industrial robotics has operated through systems programmed for repetitive tasks in highly controlled environments: robotic arms that assemble components following fixed sequences, automated guided vehicles (AGVs) that follow predefined routes, or pick-and-place systems that recognize objects in exact positions. These robots execute precise movements but lack adaptability: any change in the environment (a misplaced object, a variation in texture, an unexpected obstacle) requires reprogramming or human intervention.

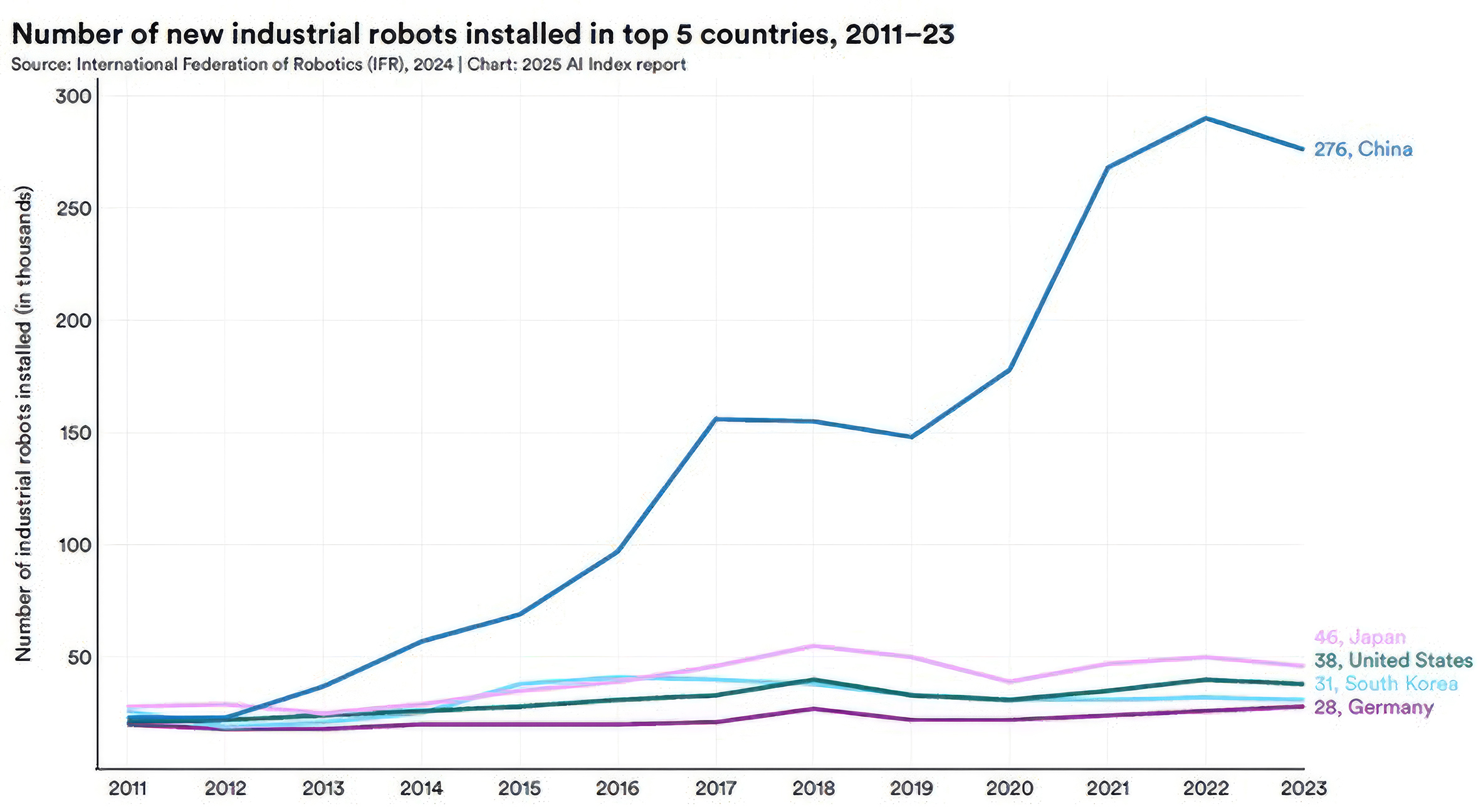

The integration of generative AI, advanced vision models and reinforcement learning is transforming this reality in industry. In 2023 alone, more than 276,000 industrial robots were installed in China (Fig. 2), which already accounts for more than half of the world's installations, and the proportion of collaborative robots has quadrupled in six years. Today's robots perceive their environment through real-time computer vision, interpret instructions in natural language, plan complex sequences of actions, adapt to unforeseen changes without reprogramming, and learn from each interaction to continuously improve. AI turns rigid industrial machines into autonomous systems capable of operating in unstructured environments and performing tasks that previously required human intelligence.

Humanoid robots: from the lab to the factory floor

Humanoid robotics has taken a quantum leap in the last two years, moving from spectacular lab demonstrations to real industrial deployments.

Figure AI, a startup valued at approximately $39 billion, completed an 11-month deployment of its Figure 02 robots at BMW's Spartanburg (South Carolina) plant in 2025. The two humanoid robots worked 10-hour shifts Monday through Friday, accumulating 1,250 hours of operation, loading more than 90,000 sheet metal parts to 5-millimeter tolerances in 2 seconds per part, and contributing to the production of more than 30,000 BMW X3 vehicles.

Figure has launched its third generation, the Figure 03, designed specifically for volume production. The company built BotQ, a manufacturing facility dedicated to humanoid robots with an initial capacity of 12,000 units per year and a goal of producing 100,000 robots in four years. The Figure 03 incorporates inductive wireless charging (2 kW via foot coils that allow the robot to simply step onto a base to recharge), a redesigned vision system with double the refresh rate and one-quarter the latency of the previous generation, and integrated palm cameras for redundant visual feedback during fine manipulation. The company projects that these robots will operate using its proprietary Helix AI, massively trained with teleoperation data and human demonstrations.

For its part, Tesla is aggressively ramping up production of its Optimus humanoid robot. The company announced plans to build a production line capable of manufacturing one million units annually, with startup expected toward the end of 2026. Elon Musk stated in October 2025 that Optimus version 3 will have "hands that are an incredible piece of engineering" with full human range of motion (22 degrees of freedom) and that the robot will be so realistic that "you'll need to touch it to believe it's really a robot". Tesla produced several thousand units in 2025 for internal use in its factories (primarily battery and component handling tasks), and plans to scale to 50,000-100,000 units in 2026. Musk estimates that, at volumes above 1 million units per year, Optimus' production costs will drop below $20,000, roughly half the cost of an equivalently scaled Model Y. The retail price, however, will be significantly higher and determined by market demand.

Boston Dynamics, a historic reference in dynamic robotics, retired its hydraulic Atlas (famous for backflips and parkour) in April 2024 and launched an all-electric Atlas designed for real industrial applications. The new Atlas integrates high-performance custom actuators with range of motion that exceeds human capabilities: its head and torso can rotate 180 degrees independently, its joints have extreme flexibility, and it is designed to exploit its own mechanical anatomy, not to be limited to human postures, although many of its control capabilities are trained from human movement. Boston Dynamics emphasizes that Atlas will prioritize speed and efficiency over anthropomorphic appearance.

In August 2025, Boston Dynamics and Toyota Research Institute demonstrated Atlas operating through Large Behavior Models (LBMs): end-to-end policies trained with extensive demonstrations and language annotations that coordinate locomotion and manipulation simultaneously. A single behavior model directly controls the entire robot, treating hands and feet almost identically, without separating low-level locomotion control from manipulation control. In public videos, Atlas performs continuous sequences of sorting and packing tasks in simulated factory environments, reacting autonomously to unexpected physical disturbances (such as researchers closing boxes or pushing objects) without interrupting the task. Boston Dynamics plans pilots with Hyundai in 2026 and limited commercial production starting in 2027.

Beyond manufacturing and logistics, humanoid robotics is opening a second front of impact: the care of older, dependent or disabled individuals. In economies facing accelerated structural ageing, this application has strategic relevance comparable to that of industrial use, with its own ethical and regulatory implications that existing frameworks have only just begun to address.

Strategic implications and operational challenges

The integration of AI into humanoid robotics introduces structural changes in manufacturing and logistics. Productivity increases radically: robots operate 24/7 without fatigue, breaks or performance variation. Recurring operational costs (energy, preventive maintenance) are predictable and decreasing with economies of scale, although the initial investment remains significant.

However, critical challenges arise. The impact on employment is real and concentrated: repetitive manual tasks in manufacturing, assembly and material handling face accelerated automation. Organizations adopting humanoid robotics must manage job transitions, reskilling programs, and increasing regulatory and societal expectations about corporate responsibility. These challenges are shared with the broader adoption of artificial intelligence, but here they carry greater sectoral concentration and social visibility.

Vendor dependence intensifies: companies adopting robots are tied to their proprietary ecosystems of hardware, software, AI upgrades, and technical support. Technological obsolescence is rapid: a robot purchased today can be surpassed in capabilities by the next generation in two years, raising questions about investment cycles and upgrade strategies.

And new operational risks emerge: autonomous systems operating in physical environments shared with humans can cause injury, property damage or critical operational disruptions if they fail. Robotics with AI requires robust safety frameworks (redundant sensors, emergency shutdown systems, dynamic exclusion zones), certified fail-safe protocols, and effective human supervision even in nominally autonomous operations.
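How these safeguards combine can be illustrated with a minimal sketch of a dynamic exclusion zone with an emergency-stop override. Everything here is an assumption for illustration: the radii, the `select_mode` function and the `sensors_agree` flag are hypothetical, are not taken from any safety standard, and do not represent a certified fail-safe design.

```python
from enum import Enum
from math import hypot
from typing import Iterable, Tuple


class Mode(Enum):
    RUN = "run"
    SLOW = "slow"
    STOP = "stop"


# Hypothetical thresholds in meters; real values come from a risk assessment.
STOP_RADIUS_M = 0.5
SLOW_RADIUS_M = 1.5


def select_mode(
    robot_xy: Tuple[float, float],
    humans_xy: Iterable[Tuple[float, float]],
    estop_pressed: bool = False,
    sensors_agree: bool = True,
) -> Mode:
    """Pick an operating mode from human proximity and safety inputs."""
    # Fail safe: an emergency stop or disagreeing redundant sensors halts the robot.
    if estop_pressed or not sensors_agree:
        return Mode.STOP

    distances = [hypot(hx - robot_xy[0], hy - robot_xy[1]) for hx, hy in humans_xy]
    if not distances:
        return Mode.RUN

    nearest = min(distances)
    if nearest <= STOP_RADIUS_M:
        return Mode.STOP
    if nearest <= SLOW_RADIUS_M:
        return Mode.SLOW
    return Mode.RUN
```

The ordering of the checks is the design choice worth noting: the stop conditions are evaluated first, so a sensor disagreement or a pressed emergency stop overrides any proximity reading.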

AI in robotics is not a future trend; it is an operational reality undergoing accelerated industrialization. Organizations that strategically evaluate when and where to adopt humanoid robotics (not all tasks justify the investment) will capture sustainable competitive advantages.

Fig. 2. New industrial robots installed. Source: Stanford (2025).
